Mariam B and Jenny

1. Repository

2. Overview

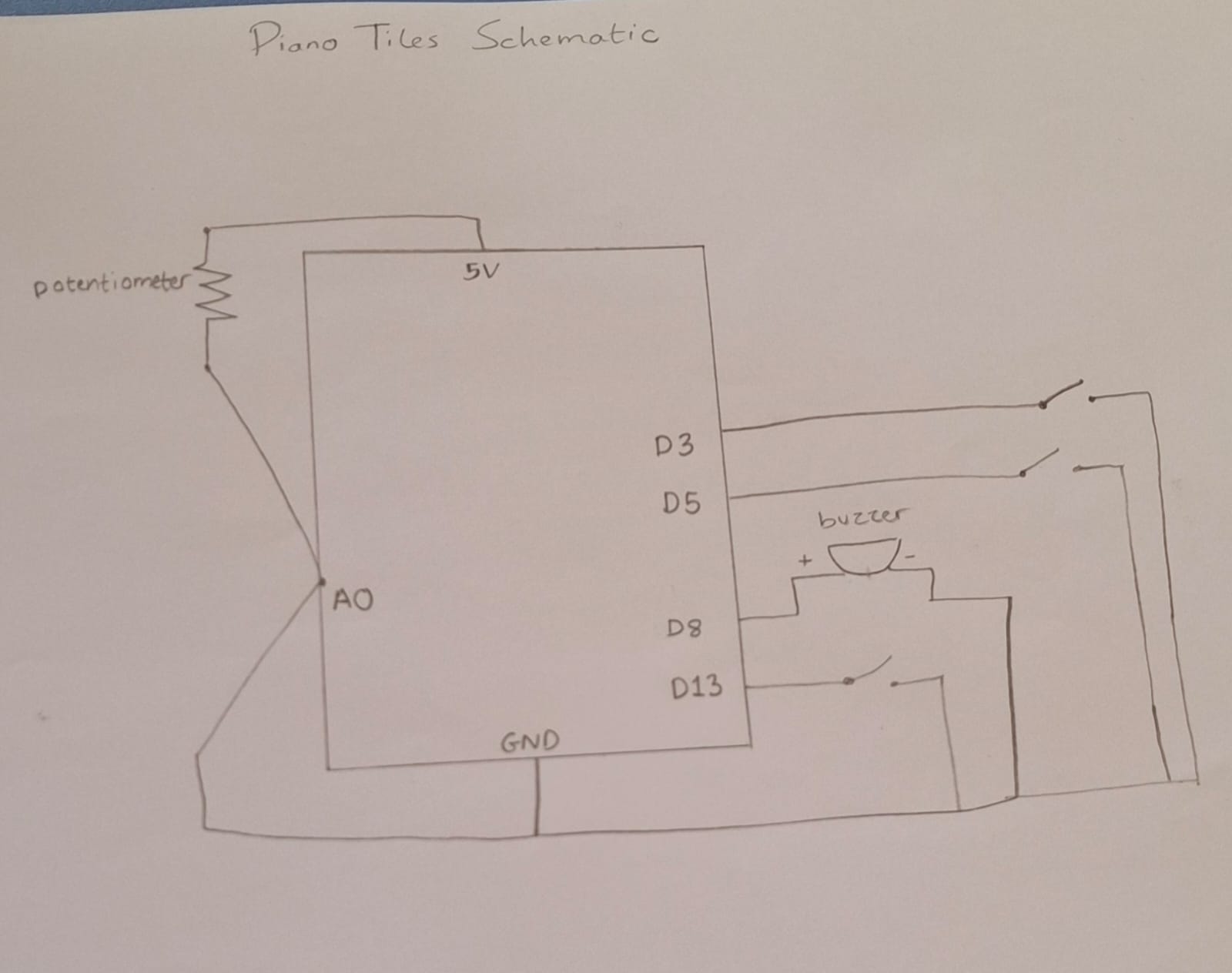

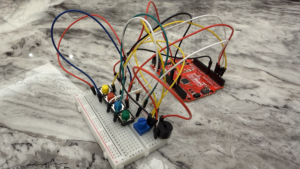

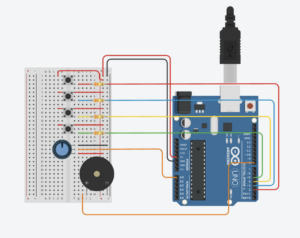

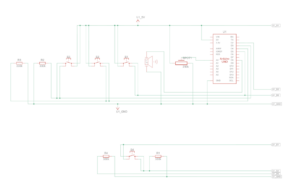

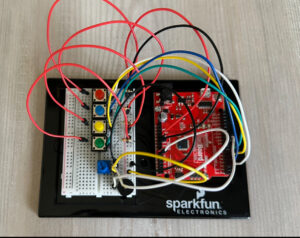

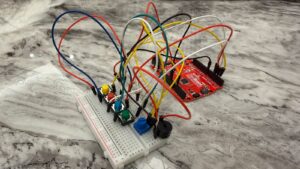

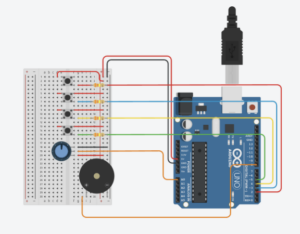

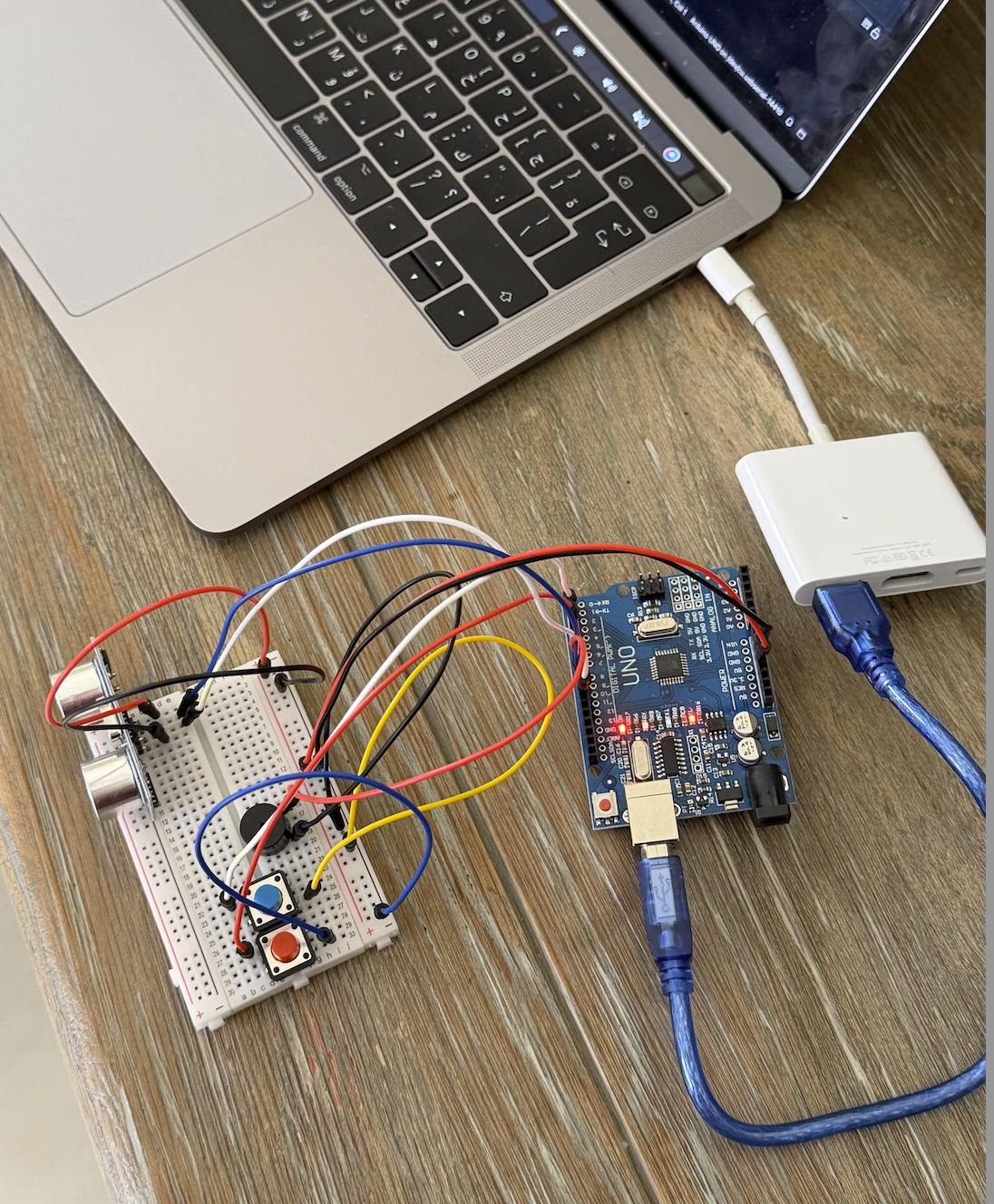

This project is an interactive electronic musical instrument. An ultrasonic distance sensor acts as a pitch controller — the player moves their hand closer or further away to select notes from a pentatonic scale. A potentiometer controls the tempo of the beat. A toggle switch changes between two musical modes: a steady melodic mode where every beat plays the note selected by the player’s hand, and a drum-style alternating mode where deep and light tones trade off rhythmically. All sound is produced through a piezo buzzer.

3. Concept

The concept was inspired by the Theremin — a classical electronic instrument played without physical contact, where the performer’s hand position in the air controls the pitch of the sound. Rather than pressing discrete keys to trigger notes, the player sculpts sound by moving their hand through the air above the ultrasonic sensor. This creates a continuous, expressive range of input that feels intuitive and physical at the same time. The toggle switch gives the instrument two distinct personalities — one melodic and one percussive — while the potentiometer lets the player set the energy of the performance by controlling how fast the beat moves.

- Note on AI assistance: The wiring configuration for this project was determined with the help of Google Gemini. The circuit concept was described to Gemini and it provided wiring instructions for the components.

4. Process and Methods

- The Arduino triggers the ultrasonic distance sensor with a 10-microsecond pulse, then measures how long the echo takes to return. A helper function, microsecondsToCentimeters(), converts that round-trip time into a distance in centimeters. The distance is then mapped to an index in the scale[] array using map(), so each hand position corresponds to a specific note.

- The scale[] array uses note definitions from a pitches.h file and is limited to a two-octave pentatonic scale — C, D, E, G, A across octaves 4 and 5. This removes dissonant half-steps so the instrument always sounds in key regardless of where the hand is placed.

- The potentiometer is read with analogRead() and mapped to a beat interval between 150 and 800 milliseconds, which sets how often the beat fires.

- A non-blocking timer using millis() handles beat timing instead of delay(). This keeps the Arduino continuously reading the sensors between beats so the instrument feels responsive and live. A beatStep counter increments on every beat and is used by the alternating mode to separate even and odd beats.

- The toggle switch selects between the two musical modes and uses INPUT_PULLUP so no external resistor is needed.
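The tempo mapping described above can be sketched as a plain function. This is a minimal sketch, not the project's code: beatIntervalMs is a hypothetical name, the arithmetic mirrors the integer formula behind Arduino's map(), and it assumes a 10-bit analogRead() range of 0 to 1023 with the lowest reading giving the shortest interval (the source does not say which way the knob runs).

```cpp
// Hypothetical helper: converts a raw potentiometer reading into a beat
// interval in milliseconds.
// Assumptions (not from the original sketch): readings span 0..1023 and a
// reading of 0 maps to the 150 ms end of the range.
constexpr long beatIntervalMs(long potReading) {
  // Same integer arithmetic as Arduino's map(potReading, 0, 1023, 150, 800).
  return (potReading - 0) * (800 - 150) / (1023 - 0) + 150;
}

static_assert(beatIntervalMs(0) == 150, "shortest interval, fastest beat");
static_assert(beatIntervalMs(1023) == 800, "longest interval, slowest beat");
```

Under these assumptions, a mid-travel reading of 512 gives 475 ms, roughly two beats per second.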

5. Technical Details

- The pitches.h file defines note names as frequency constants, allowing the scale array to be written in readable musical notation rather than raw numbers:

// ── MUSICAL SCALE ──

// A two-octave pentatonic scale (C, D, E, G, A across octaves 4 and 5)

int scale[] = {NOTE_C4, NOTE_D4, NOTE_E4, NOTE_G4, NOTE_A4,

NOTE_C5, NOTE_D5, NOTE_E5, NOTE_G5, NOTE_A5};

int numNotes = 10;
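One way to avoid keeping numNotes in sync by hand is to derive it from the array itself. The sketch below is an illustration rather than the project's code: kScale and kNumNotes are hypothetical names, and the frequencies are written out as the rounded values that the standard Arduino pitches.h assigns to these notes, so the snippet stands alone.

```cpp
// Illustrative names (kScale, kNumNotes); frequencies in Hz are the values
// the standard Arduino pitches.h defines for each note.
constexpr int kScale[] = {
  262, 294, 330, 392, 440,   // C4, D4, E4, G4, A4
  523, 587, 659, 784, 880    // C5, D5, E5, G5, A5
};

// Element count computed at compile time, so adding or removing a note
// never leaves the count stale.
constexpr int kNumNotes = sizeof(kScale) / sizeof(kScale[0]);

static_assert(kNumNotes == 10, "two octaves of five notes");
```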

- The timer variables are declared as unsigned long because millis() returns an unsigned long count of milliseconds since the board started. On boards where int is 16 bits (maximum 32,767), storing that count in an int would overflow after only about 32 seconds:

unsigned long startMillis = 0;

unsigned long currentMillis = millis();
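The comparison behind the non-blocking timer can be sketched as below. beatDue is a hypothetical helper, not taken from the project, and uint32_t stands in for unsigned long, which is 32 bits wide on AVR-based Arduino boards. Subtracting the start time from the current time in unsigned arithmetic keeps the check correct even after the millis() counter wraps around.

```cpp
#include <stdint.h>

// Hypothetical helper: true once at least intervalMs have elapsed since
// startMs. Unsigned subtraction wraps modulo 2^32, so the result is still
// the elapsed time after the counter rolls over.
constexpr bool beatDue(uint32_t nowMs, uint32_t startMs, uint32_t intervalMs) {
  return static_cast<uint32_t>(nowMs - startMs) >= intervalMs;
}

static_assert(!beatDue(1000, 900, 150), "only 100 ms elapsed");
static_assert(beatDue(1050, 900, 150), "150 ms elapsed");
// Counter wrapped between start and now: 16 ms before the wrap plus 100 after.
static_assert(beatDue(100, 0xFFFFFFF0u, 116), "116 ms elapsed across rollover");
```

In loop(), a true result would typically be followed by startMillis = currentMillis; so that timing restarts for the next beat.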

- The distance sensor trigger sequence follows the standard HC-SR04 pattern — a brief LOW to clear the pin, a 10-microsecond HIGH pulse to fire the sensor, then LOW again to listen:

// ── ULTRASONIC SENSOR TRIGGER SEQUENCE ──

// Pull the trigger pin LOW briefly to ensure a clean starting state

digitalWrite(distPin, LOW);

delayMicroseconds(2);

// Send a 10-microsecond HIGH pulse to fire the ultrasonic burst

digitalWrite(distPin, HIGH);

delayMicroseconds(10);

// Pull LOW again; the sensor is now listening for the echo

digitalWrite(distPin, LOW);

// Measure how long the echo takes to return, in microseconds.

// The 10000 timeout prevents the program from freezing if no object is

// close enough to reflect the pulse; pulseIn() returns 0 instead.

duration = pulseIn(echoPin, HIGH, 10000);
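The source names microsecondsToCentimeters() without showing it. A likely shape, assuming it follows the Arduino Ping example listed in the resources, is sketched here: sound covers roughly one centimeter every 29 microseconds, and the echo time is halved because the pulse travels out and back.

```cpp
// Assumed implementation, modelled on the Arduino Ping example; the
// project's own helper may differ.
constexpr long microsecondsToCentimeters(long microseconds) {
  // About 29 us per centimeter at room temperature; divide by 2 because
  // the measured time covers the trip to the hand and back.
  return microseconds / 29 / 2;
}

static_assert(microsecondsToCentimeters(2900) == 50, "far edge of play zone");
static_assert(microsecondsToCentimeters(0) == 0, "pulseIn() timeout case");
```

With this formula, the 10000-microsecond timeout caps the reportable distance at 172 cm, comfortably beyond the 50 cm play zone.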

- The distance is mapped starting from 2 cm rather than 0 because the HC-SR04 has a physical blind spot below approximately 2 centimeters where readings are unreliable. The upper limit of 50 cm keeps the performance zone compact and ergonomic:

int noteIndex = map(cm, 2, 50, 0, numNotes - 1);
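Arduino's map() does not limit its output to the target range, so a distance above 50 cm would produce an index past the end of scale[]. The source does not show whether the sketch guards against this; one option is to clamp the distance first. The helper below is a hypothetical sketch that reproduces map()'s integer arithmetic in plain C++.

```cpp
// Hypothetical helper, not from the project: clamps the distance to the
// 2..50 cm play zone, then applies the same integer formula as
// map(cm, 2, 50, 0, numNotes - 1).
constexpr int noteIndexForDistance(long cm, int numNotes) {
  return static_cast<int>(
      ((cm < 2 ? 2 : (cm > 50 ? 50 : cm)) - 2) * (numNotes - 1) / (50 - 2));
}

static_assert(noteIndexForDistance(2, 10) == 0, "closest hand position");
static_assert(noteIndexForDistance(50, 10) == 9, "farthest hand position");
static_assert(noteIndexForDistance(120, 10) == 9, "out of range stays valid");
static_assert(noteIndexForDistance(0, 10) == 0, "timeout reading stays valid");
```

In the sketch itself, the same effect could come from calling constrain(cm, 2, 50) before the existing map() call.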

- The alternating drum mode uses the modulo operator on beatStep to separate even and odd beats. Even beats play a fixed deep tone of 100 Hz as a kick drum, and odd beats play the hand-controlled note as a snare. Each tone lasts 50 milliseconds:

if (beatStep % 2 == 0) {

  tone(buzzerPin, 100, 50);

} else {

  tone(buzzerPin, currentNote, 50);

}
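Both modes can be expressed as one small decision. This is a sketch under assumptions: frequencyForBeat and the alternatingMode flag are hypothetical names, and the steady-mode branch follows the overview's description rather than code shown in the source.

```cpp
// Hypothetical helper: picks the frequency (Hz) to play on a given beat.
// alternatingMode is an assumed flag derived from the toggle switch.
constexpr int frequencyForBeat(unsigned long beatStep, bool alternatingMode,
                               int currentNote) {
  // Alternating mode: fixed 100 Hz "kick" on even beats, hand-selected note
  // on odd beats. Steady mode: hand-selected note on every beat.
  return (alternatingMode && beatStep % 2 == 0) ? 100 : currentNote;
}
```

The returned value would then be passed to tone() in place of the two separate calls, for example tone(buzzerPin, frequency, 50).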

6. Reflection

This project pushed us to think about musical interaction as much as electronics. The biggest technical challenge was replacing delay() with the millis() timer — understanding why the Arduino had to keep running between beats, rather than pausing, took some time but made the instrument feel genuinely alive and responsive in a way that a delay-based version couldn’t. The decision to use a pentatonic scale rather than mapping the full chromatic range was the single biggest improvement to how the instrument sounds; removing the dissonant notes made even accidental hand movements musical. The Theremin inspiration also shaped how the wiring was approached — describing the concept to Gemini and using its wiring instructions as a reference helped bridge the gap between the interaction idea and the physical circuit. If we were to extend this, we could add a second switch to select between different scales, a visual indicator showing the active mode, and potentially an LCD display to show the current tempo and note being played.

7. Resources

- https://pyserial.readthedocs.io/en/latest/

- https://docs.arduino.cc/built-in-examples/sensors/Ping/

- Google Gemini — used for wiring guidance