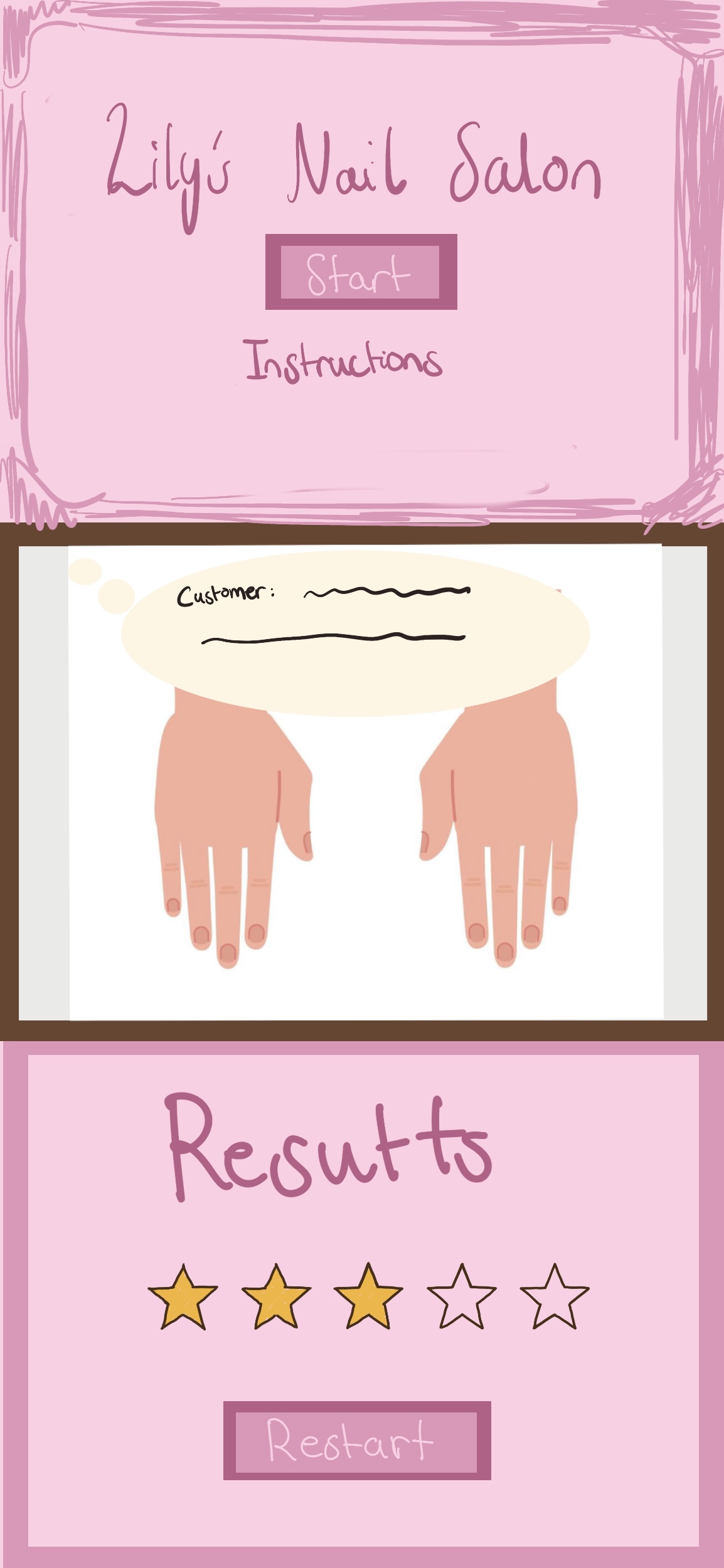

For my midterm project, I made an interactive nail salon game in p5.js called Salama’s Nail Salon. In the game, a customer says a short line that hints at the kind of color they want, and the player has to choose a nail polish color that matches it. I wanted it to feel simple and cute but still have real game logic behind it, so I used mood-based color matching instead of letting the player pick any random color. The player uses a hue slider to test different shades, and the game gives a result out of five stars depending on how close the chosen color is to the customer’s intended mood.

To build the project, I first split it into three main screens, which helped me organize the whole game better. I made a start screen with instructions, a playing screen where the customer gives their line and the player chooses a nail color, and a result screen that gives feedback and has a restart button in case the player would like to try again. Structuring the project this way made it much easier for me to understand the logic of the game and the logic of the code.
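The three-screen structure can be sketched as a small state machine. This is only a minimal illustration: the names `SCREENS` and `nextScreen`, the action strings, and the choice to send the restart button back to the start screen are all assumptions, not the identifiers or behavior of my actual sketch.

```javascript
// Hypothetical screen names; the real sketch may use different identifiers.
const SCREENS = { START: "start", PLAYING: "playing", RESULT: "result" };

// Return the screen that should follow `current` after a player action.
// Any combination that is not listed leaves the screen unchanged.
// Assumption: restarting goes back to the start screen.
function nextScreen(current, action) {
  if (current === SCREENS.START && action === "startClicked") return SCREENS.PLAYING;
  if (current === SCREENS.PLAYING && action === "submitClicked") return SCREENS.RESULT;
  if (current === SCREENS.RESULT && action === "restartClicked") return SCREENS.START;
  return current;
}

// In p5.js, draw() would then branch on the current screen every frame:
//   if (screen === SCREENS.START) drawStartScreen();
//   else if (screen === SCREENS.PLAYING) drawPlayingScreen();
//   else drawResultScreen();
// (drawStartScreen and the other two are placeholder names.)
```

Keeping the transitions in one function means each button handler only has to call `screen = nextScreen(screen, "...")`.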

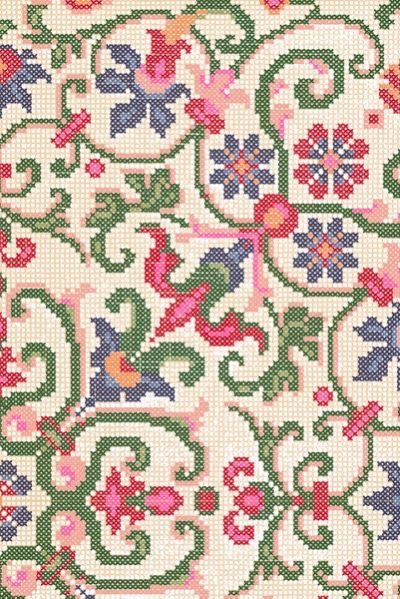

For the visuals, I used AI to produce my background image of a nail salon, and I added a font and soft pink buttons so the overall style fit a nail salon game. One part I spent a lot of time on was the nail itself. Instead of drawing the full finger, I used a finger image and drew a nail shape with the chosen color directly on top of it so the player could see the polish update on the actual nail. This took a lot of trial and error, because the shape had to be positioned and rounded carefully to fit inside the nail correctly. I also added a glossy highlight so it looked more like actual nail polish instead of just a flat shape.
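Drawing the polish on top of the finger image could look roughly like the sketch below. The function name `drawNail`, the corner radii, the saturation and brightness values, and the highlight position are illustrative guesses rather than the values I ended up with. It takes a p5 instance `p` so the same code works in p5's instance mode.

```javascript
// Draw a polish-colored nail shape with a glossy highlight on top of an
// already-drawn finger image. `p` is a p5 instance (or any object exposing the
// same drawing methods). All proportions here are illustrative guesses.
function drawNail(p, x, y, w, h, hue) {
  p.push();
  p.colorMode(p.HSB, 360, 100, 100, 100);
  p.noStroke();

  // Nail body: top corners rounded more than the bottom corners.
  p.fill(hue, 70, 90);
  p.rect(x, y, w, h, w * 0.5, w * 0.5, w * 0.2, w * 0.2);

  // Glossy highlight: a narrow, semi-transparent white ellipse.
  p.fill(0, 0, 100, 45);
  p.ellipse(x + w * 0.3, y + h * 0.4, w * 0.15, h * 0.5);
  p.pop();
}
```

Because the hue comes straight from the slider, calling `drawNail` every frame after drawing the finger image keeps the polish in sync with the player's choice.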

I am especially proud of the result system. It compares the chosen color to the target color for the customer’s mood and then gives a star rating from one to five. At first, I was showing more technical feedback, like how far the player was from the target color, but when I had my sister Salama try the game, I realized that this feedback did not fit the tone of the game very well. Since the game is meant to feel playful and easy to understand, I changed the result screen so it gives simple reactions and color-based hints instead, and I think this made the game feel much more natural and kid-friendly. I did use AI to help with this part, and I explain exactly how I used it at the end of this post.

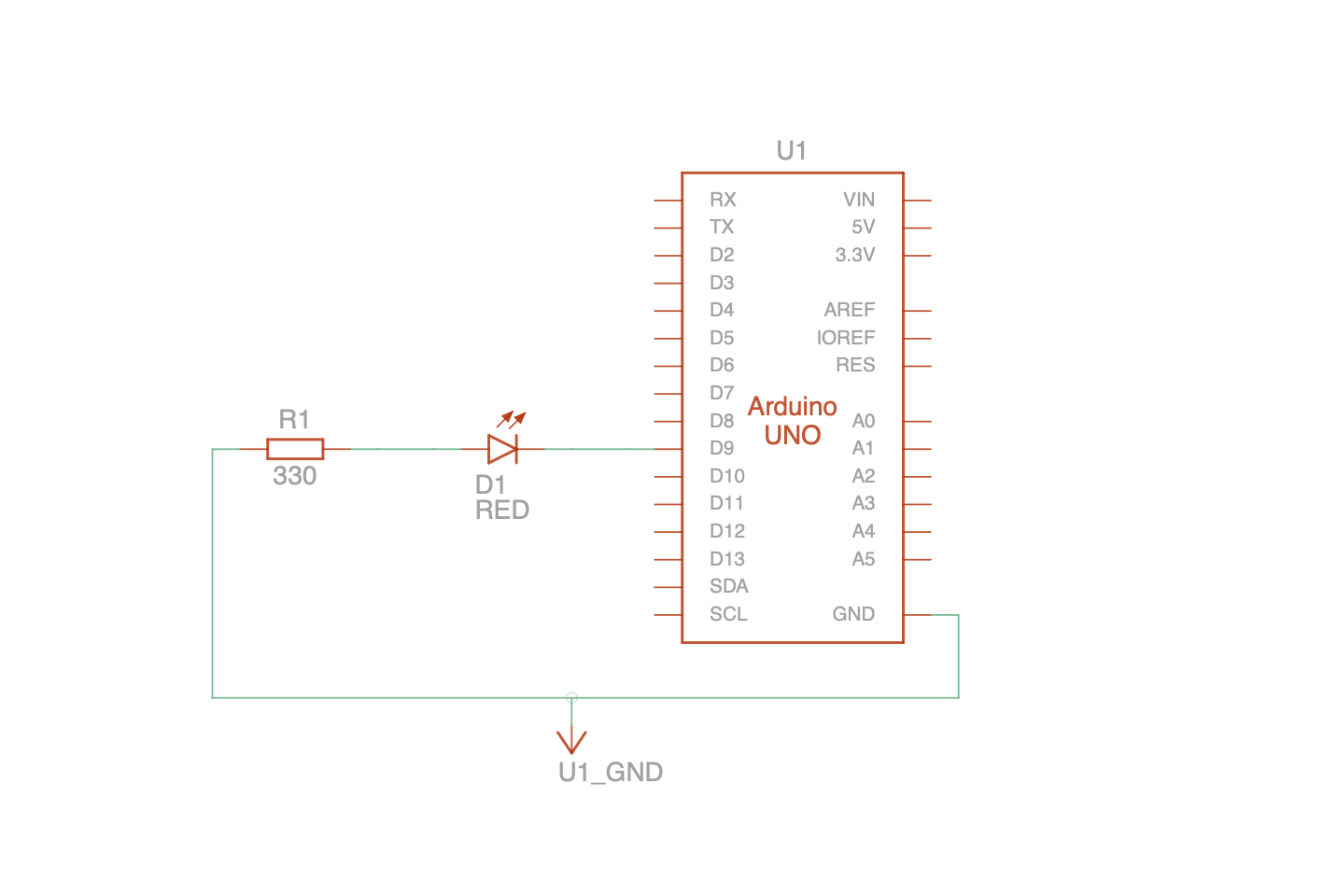

```javascript
// Set the result title and hint from the star rating.
// Uses the sketch's `stars` and `correctMood` variables.
function resultFeedback() {
  if (stars === 5) {
    resultTitle = "Perfect match!";
    resultHint = "The customer loved this color.";
  } else if (stars === 4) {
    resultTitle = "So close!";
    resultHint = colorHint(correctMood);
  } else if (stars === 3) {
    resultTitle = "Nice try!";
    resultHint = colorHint(correctMood);
  } else if (stars === 2) {
    resultTitle = "Not quite yet!";
    resultHint = colorHint(correctMood);
  } else {
    resultTitle = "Oops!";
    resultHint = "That color was far from what the customer wanted.";
  }
}

// Return a color-based hint for the given customer mood.
function colorHint(mood) {
  if (mood === "Happy") {
    return "The customer wanted a brighter, sunnier color.";
  } else if (mood === "Calm") {
    return "The customer wanted a softer, more bluish color.";
  } else if (mood === "Confident") {
    return "The customer wanted a bolder, more purple color.";
  } else if (mood === "Sad") {
    return "The customer wanted a deeper, darker color.";
  } else {
    return "Try a different shade next time.";
  }
}
```

Another part I am happy with is that I included multiple customer moods and randomized phrases, so the game does not feel exactly the same every time. I also used object-oriented programming by making a Customer class. Each round creates a customer object that stores the customer’s mood and phrase, and this helped me organize the dialogue part of the game more clearly. I used arrays of phrases for each mood and randomly selected one for each round. I also used buttons, a slider, text, images, shapes, and sound, so the final sketch combines a lot of the tools we have learned. Even though the game looks simple, there is a lot of structure behind it, and I’m really proud of how I organized it.
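A Customer class along these lines could look like the sketch below. The mood names match the ones in my game, but the phrases, the `PHRASES` object, and the `rng` parameter are made up for this example and are not copied from my sketch. In p5.js itself, `random(array)` can pick a random element directly; plain `Math.random` is used here so the example runs anywhere.

```javascript
// Example phrase lists. These phrases are invented for illustration;
// the actual lines in the game are different.
const PHRASES = {
  Happy: ["I just got great news today!", "I want something that feels like sunshine."],
  Calm: ["I need something relaxing.", "I want a color that feels like the sea."],
  Confident: ["I have a big presentation tomorrow.", "I want a color that stands out."],
  Sad: ["It has been a gloomy week.", "I want something quiet and deep."],
};

class Customer {
  // `rng` returns a number in [0, 1). It defaults to Math.random and can be
  // replaced with a fixed function to make a round predictable.
  constructor(rng = Math.random) {
    const moods = Object.keys(PHRASES);
    this.mood = moods[Math.floor(rng() * moods.length)];
    const options = PHRASES[this.mood];
    this.phrase = options[Math.floor(rng() * options.length)];
  }
}

// One new customer per round.
const customer = new Customer();
console.log(customer.mood, "-", customer.phrase);
```

Storing the mood and phrase together on one object means the playing screen and the result screen can both read from the same customer.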

One challenge I ran into was figuring out how to make the game feel visually complete without making it too complicated. At first, I was not sure how to show the nail color in a way that looked convincing, and I had to test many different values before the color shape finally fit the nail properly. Another challenge was deciding what kind of feedback to show on the result screen. I did not want it to feel too technical, so I had to rethink the wording and make it fit the tone of the game better. I also had to think a lot about spacing and layout so the nail image would not overlap with the hue spectrum and the controls.

AI Use:

I used AI in two specific parts of this project. First, I used AI to generate the background image for the game, because I wanted a background that looked soft, cute, and clearly related to a nail salon. In my prompt, I explained that I wanted a background image for a nail salon game, described how I wanted it to look visually, and explained that it would be used across all of the screens in the game. I generated the image during the midterm progress stage, and I decided to keep it because it matched the theme I wanted better than the images I found online.

The second place where I used AI was for the wording of the result screen. My original result feedback system was much more technical. In my code, the result was based on comparing the player’s chosen hue to the target hue for the customer’s mood. I used functions like hueDistance() to measure how close the chosen color was to the target color, starsFromDistance() to convert that distance into a star rating from one to five, and computeResult() to calculate the final result. Because the logic of the system was based on color distance, my first version of the result screen also showed that technical logic more directly. It included the star result, the predicted mood, and feedback about how far the chosen hue was from the target hue. From a coding perspective, that made sense to me because it matched how I had built the scoring system.
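The scoring logic described above can be sketched as follows. The function names are the ones from my code, but the bodies, the signatures, the star cutoffs, and the `TARGET_HUES` values are assumptions written for this example, not my exact implementation.

```javascript
// Shortest distance between two hues on the 0-360 color wheel,
// so 350 and 10 count as 20 degrees apart rather than 340.
function hueDistance(a, b) {
  const diff = Math.abs(a - b) % 360;
  return Math.min(diff, 360 - diff);
}

// Convert a hue distance into 1-5 stars. These cutoffs are illustrative only.
function starsFromDistance(distance) {
  if (distance <= 10) return 5;
  if (distance <= 25) return 4;
  if (distance <= 45) return 3;
  if (distance <= 70) return 2;
  return 1;
}

// Hypothetical target hues per mood, picked only for this example.
const TARGET_HUES = { Happy: 50, Calm: 210, Confident: 280, Sad: 240 };

// Compare the player's hue to the target hue for the customer's mood.
function computeResult(chosenHue, mood) {
  const distance = hueDistance(chosenHue, TARGET_HUES[mood]);
  return { distance, stars: starsFromDistance(distance) };
}
```

The wrap-around in `hueDistance` matters because hue is circular: without it, two reds on opposite ends of the slider would be scored as far apart.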

However, after I had my sister Salama test the game, I realized that this version of the result feedback did not fit the tone of the project very well. She did not really understand what the technical feedback meant in the context of a playful nail salon game, and it felt too logic-based for the kind of experience I wanted. Because of that, I used AI specifically to help me rethink how the result should be communicated to the player. AI helped me revise the wording and presentation of the result. I changed the result screen in the code by replacing the more technical feedback with the variables resultTitle and resultHint, and by adding the functions resultFeedback() and colorHint(mood) to generate simpler and more natural feedback for the player. Instead of showing technical information, the result screen now gives simpler reactions such as “Perfect match!” or “Nice try!” and follows them with clearer color-based hints, such as saying the customer wanted something brighter, softer, darker, or more bluish. So the logic of the result system stayed the same, but AI helped me change the way the result is expressed so that it makes more sense to the player and matches the tone of the game better. Afterwards, I edited the feedback myself, added phrases of my own, and kept adjusting the wording.

Resources:

My font: https://fonts.google.com/specimen/Emilys+Candy?categoryFilters=Feeling:%2FExpressive%2FCute&preview.script=Latn

My Background Sound: https://pixabay.com/music/beats-chill-lofi-music-background-358420/

Images used:

I used the p5.js library and the p5.sound library.

I used the course slides to copy parts like input, fullscreen, and window resizing. I also used ideas from the class for structuring the game into different screens and organizing the game logic.

I used AI to generate the background image and to help reword the result feedback.

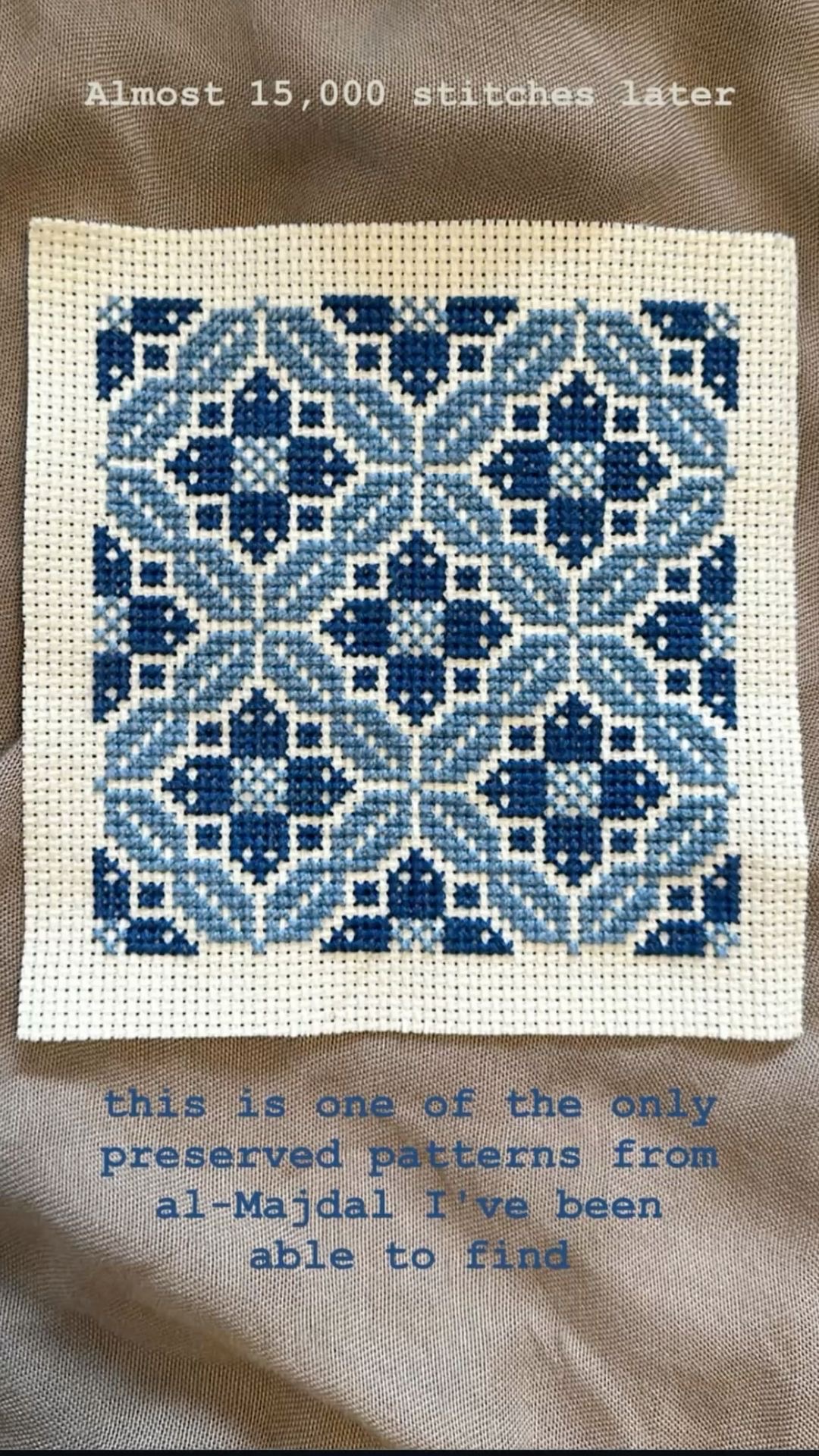

My sister Salama was the main testing participant and also the inspiration for this game, which is why I felt it was fitting to call it Salama’s Nail Salon.

I also received major help from my brother with the coding logic of the game. I had it structured weirdly, and he helped me understand how to organize it.

game demo
