Crack the Code!

In this project, I developed a “Crack the Code” puzzle that unlocks a wooden box. The user receives four sets of hints to guess the three-digit code required to open it. Using the knob and button on the box, the user enters each digit of the code. When the correct code is entered, the box unlocks, and the user wins the chocolate candies inside! Until then, the box remains locked.

Video Demonstration of the Project

Interaction Design: Instructions on how to interact with the box are first shown to the user on the computer screen. The red button on the box does more than enter digits: it also acts as the “start” and “restart” button for the game, so it doubles as the navigation button.

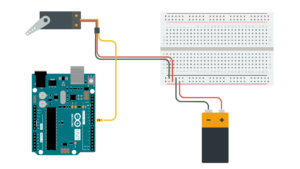

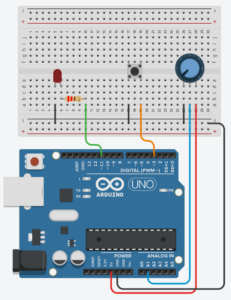
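To illustrate how one button can play several roles, here is a minimal sketch of a state-dependent button handler. The state names (`instructions`, `playing`, `won`) and the action labels are hypothetical; the post only says that the same button starts the game, enters digits, and restarts.

```javascript
// Hypothetical state names and actions: the post only states that the
// same button starts the game, enters digits, and restarts it.
function onButtonPress(state) {
  switch (state) {
    case "instructions":
      return { next: "playing", action: "start" };
    case "playing":
      return { next: "playing", action: "enterDigit" };
    case "won":
      return { next: "instructions", action: "restart" };
    default:
      throw new Error(`Unknown game state: ${state}`);
  }
}
```

In a p5 sketch, a function like this would be called once per detected button press, and the drawing code would pick the screen to show from the current state.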

Arduino Sketch: My implementation uses a servo motor, a potentiometer, and a button. The servo motor locks and unlocks the box: in the sketch below, `lock()` turns it to 0 degrees and `unlock()` turns it to 90 degrees. The potentiometer selects the digit while the user enters the code; its reading is mapped to a value from 0 to 10 (originally 0-9, but extended to 10 because of display issues). The button either navigates the game or enters a digit, depending on the game state. The digit, the button state, and the lock state are sent to p5.js, which decides whether to open the box.

#include <Servo.h>

const int servoPin = 9;
const int buttonPin = 3;
const int potentioPin = A1;
const int ledPin = 11;

Servo myservo;
int potValue;
bool locked = true;
int digit;

void setup() {
  // Flash the LED for one second at startup
  pinMode(ledPin, OUTPUT);
  digitalWrite(ledPin, HIGH);
  delay(1000);
  digitalWrite(ledPin, LOW);

  myservo.attach(servoPin);
  pinMode(buttonPin, INPUT_PULLUP);
  Serial.begin(9600);

  // Always end up locked at the beginning of the program:
  // open for two seconds, then lock
  unlock();
  delay(2000);
  lock();
}

void loop() {
  // Check if data is available to read from serial
  if (Serial.available() > 0) {
    // Read the incoming data up to (not including) the newline
    String receivedData = Serial.readStringUntil('\n');

    // Echo the received data back over serial
    Serial.println(receivedData);

    // "true" unlocks the safe, "false" locks it
    if (receivedData == "true") {
      unlock(); // Unlock the safe
    } else if (receivedData == "false") {
      lock(); // Lock the safe
    }
  }

  int buttonState = digitalRead(buttonPin);
  potValue = analogRead(potentioPin);
  digit = map(potValue, 0, 1023, 0, 10);

  // Print "digit, button, locked" to serial on a single line
  Serial.print(digit);
  Serial.print(", ");
  // Prints "false" while the pin reads LOW and "true" otherwise.
  // With INPUT_PULLUP, LOW means pressed if the button is wired to ground.
  Serial.print(buttonState == LOW ? "false" : "true");
  Serial.print(", ");
  Serial.println(locked ? "true" : "false");
}

void lock() {
  myservo.write(0); // Set the servo to lock the safe (position 0)
  locked = true;    // Update the locked status
}

void unlock() {
  myservo.write(90); // Set the servo to unlock the safe (position 90)
  locked = false;    // Update the locked status
}
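To see what the `map(potValue, 0, 1023, 0, 10)` call does to the knob, the following sketch reproduces Arduino's integer `map` arithmetic in JavaScript and counts how many of the 1024 possible readings land on each value. The helper names are made up for illustration, and the `0, 1024` variant is only one possible alternative, not something the project uses. The post does not say what the display issue with 0-9 was; the counts for that range are shown simply because they are consistent with it.

```javascript
// Integer version of Arduino's map(): the division truncates toward zero,
// as it does on the board.
function arduinoMap(x, inMin, inMax, outMin, outMax) {
  return Math.trunc(((x - inMin) * (outMax - outMin)) / (inMax - inMin)) + outMin;
}

// Count how many of the 1024 possible ADC readings land on each output value.
function readingsPerValue(inMax, outMax) {
  const counts = new Array(outMax + 1).fill(0);
  for (let reading = 0; reading <= 1023; reading++) {
    counts[arduinoMap(reading, 0, inMax, 0, outMax)]++;
  }
  return counts;
}

const asWritten = readingsPerValue(1023, 10);   // the call used in the sketch
const zeroToNine = readingsPerValue(1023, 9);   // the earlier 0-9 range
const alternative = readingsPerValue(1024, 10); // assumption: a possible variant

console.log("0..10 over 0..1023:", asWritten.join(" "));
console.log("0..9  over 0..1023:", zeroToNine.join(" "));
console.log("0..10 over 0..1024:", alternative.join(" "));
```

With the range used in the sketch, the value 10 appears only at the very top reading (1023), so digits 0 to 9 each get roughly a tenth of the knob's travel. With the 0-9 range, 9 is the value that appears only at the top reading. Mapping against an upper input bound of 1024 never produces 10 at all, which would be another way to get ten evenly sized zones.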

P5.js Sketch: The main instructions and hints are presented to the user in the p5 sketch. There are three different code sets, and one of them is chosen at the start of the game as the code that opens the box. I initially planned to code different sets of hints, but that proved difficult, so I switched to three sets of codes for the game rounds. Wrong inputs trigger sound and visual feedback. I aimed to make the screens fun and engaging, and I used photos of the real box to keep the instructions clear.
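A minimal sketch of the round logic described above: pick one code at the start, collect three digits, and compare. The codes in `CODE_SETS` are placeholders (the post does not list the real ones), and all function names are hypothetical.

```javascript
// Placeholder codes for illustration only; the project's real codes
// are not given in the post.
const CODE_SETS = ["042", "317", "685"];

// Choose one code at the start of the game. The random source is a
// parameter so the choice can be made predictable when testing.
function pickCode(codeSets, random = Math.random) {
  return codeSets[Math.floor(random() * codeSets.length)];
}

// Collect digits one at a time and compare once the entry is complete.
function createRound(secret) {
  let entered = "";
  return {
    // Returns "pending" until enough digits are in, then "correct" or
    // "wrong"; the entry is cleared afterwards for the next attempt.
    enterDigit(digit) {
      entered += String(digit);
      if (entered.length < secret.length) return "pending";
      const result = entered === secret ? "correct" : "wrong";
      entered = "";
      return result;
    },
  };
}

// The Arduino sketch unlocks when it reads the line "true".
function serialMessageFor(result) {
  return result === "correct" ? "true\n" : null;
}
```

A "wrong" result is where the sound and visual feedback would be triggered. The message ends in a bare newline because the Arduino side reads up to `'\n'` and compares the rest against `"true"` exactly, so a trailing carriage return would prevent a match.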

The p5 sketch receives the digit derived from the potentiometer reading from the Arduino and displays it. When the game ends with the correct code, it sends a signal to the Arduino to open the box, and the servo motor turns to the unlocked position.
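On the receiving side, each line from the Arduino has the form `digit, button, locked`. The sketch below shows one way to parse it; the function names are hypothetical and the serial library is left out on purpose. Three details come from the Arduino code: the board also echoes back any `true`/`false` command it receives, so lines without three fields are ignored; the digit can be 10, which is clamped to 9 here (an assumption about how the display handles it); and the button field reads `"false"` while the pin is LOW, which means pressed if the button is wired to ground.

```javascript
// Parse one line from the Arduino. Returns null for anything that is not
// a three-field status line (for example an echoed "true" or "false").
function parseStatusLine(line) {
  const fields = line.trim().split(",").map((field) => field.trim());
  if (fields.length !== 3) return null;
  const rawDigit = Number.parseInt(fields[0], 10);
  if (Number.isNaN(rawDigit)) return null;
  return {
    digit: Math.min(Math.max(rawDigit, 0), 9), // assumption: show 10 as 9
    pressed: fields[1] === "false", // pin reads LOW; assumes wiring to ground
    locked: fields[2] === "true",
  };
}

// The Arduino reports the button state on every loop, so a held button
// appears as many "pressed" lines. This turns them into single events.
function makePressDetector() {
  let wasPressed = false;
  return function isNewPress(pressed) {
    const fired = pressed && !wasPressed;
    wasPressed = pressed;
    return fired;
  };
}
```

Only a new press, not a held button, should enter a digit or advance the screen; otherwise one push would fill all three digits at once.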

In this project, I’m particularly proud of the box I’ve created. Although the door hinges don’t work quite as intended, the box holds a good overall shape. The box is made of plywood. Building it meant learning Adobe Illustrator so I could draw the wooden parts with precise measurements, which was a challenging but rewarding process.

For future improvements, placing the Arduino board inside the box is a priority. That was part of the initial plan, but because of incorrect box measurements the Arduino didn’t fit. Making the box door sturdier is also crucial. I am also considering adding background sounds as feedback for screen interactions, which would enhance the overall experience.

Overall, the project was an enjoyable and rewarding process. I learned a lot about combining creative design, the technical use of Arduino and p5.js, and problem-solving to deliver an engaging, interactive experience.