I will be writing this blog post gradually as the project progresses, instead of all at once:

PROGRESS:

- Arduino wiring: DONE

- Arduino code: DONE

- UI

- p5 code communication

30/04/26

I tried connecting my accelerometer to my breadboard without soldering. I placed the headers into the breadboard, set the accelerometer on top of them, and attached the female end of a jumper wire on top. I downloaded the relevant libraries and wrote the code from this page. Unfortunately, the Arduino showed no connection whatsoever: the code was designed to print “No ADXL345 sensor detected.” to the serial monitor when the sensor is not found, and indeed that was what my serial monitor displayed.

Now, I will be moving on to a different approach, building on the unusual switch and musical instrument assignments we made. The idea still follows the same concept of composing music in an opera; if you compose incorrectly, a buzzer will sound, and the ballet dancers will fall. Instead of using an accelerometer to measure the x-y position of the user’s hand, I will attach foil and wires to each finger of a glove. Each combination of wire connections will control a relevant aspect of the music, such as pitch, speed, note, and beats. This wiring idea was also heavily inspired by the emerging usage of TouchDesigner.

01/05/26

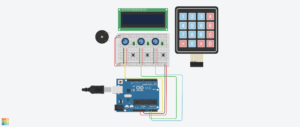

I decided to go ahead with my glove idea. I originally wanted to involve all five fingers, but then I realized that would complicate things, so I stuck to using three. The first is in charge of playing the song, the second speeds it up, and the third slows it down. I tested my wiring by also connecting an LED to the breadboard, and it worked. I didn’t connect my buzzer today, and honestly, I don’t think I will until the very end. I got a new buzzer whose legs look extremely delicate, and I’m not the most patient or gentle with electronic devices, so I don’t want to risk breaking yet another buzzer from the get-go.
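To make the three-finger mapping concrete, here is a minimal sketch of the control logic in plain C++, kept free of Arduino calls so it can be tested on its own. Everything named here (`GloveState`, `FingerContacts`, `updateGlove`, the 120 BPM starting tempo, the 10 BPM step, and the 40 to 240 BPM limits) is a hypothetical placeholder and not taken from my actual sketch. It also assumes the song plays only while the first finger's contact is closed, and that each new touch of the other two fingers changes the tempo once.

```cpp
#include <algorithm>

// Hypothetical state of the music player driven by the glove.
struct GloveState {
    bool playing = false;  // true while the song should be playing
    int tempoBpm = 120;    // assumed starting tempo
};

// One reading of the three foil contacts (true = contact closed).
struct FingerContacts {
    bool play;    // first finger: play the song
    bool faster;  // second finger: speed up
    bool slower;  // third finger: slow down
};

// Assumed tempo limits and step size, chosen only for illustration.
constexpr int kMinBpm = 40;
constexpr int kMaxBpm = 240;
constexpr int kStepBpm = 10;

// Returns the new state given the current and previous contact readings.
GloveState updateGlove(GloveState state, FingerContacts now, FingerContacts prev) {
    // The song plays for as long as the first contact stays closed.
    state.playing = now.play;

    // Change tempo only when a contact has just closed (rising edge),
    // so holding a finger down does not keep changing the speed.
    if (now.faster && !prev.faster) {
        state.tempoBpm = std::min(kMaxBpm, state.tempoBpm + kStepBpm);
    }
    if (now.slower && !prev.slower) {
        state.tempoBpm = std::max(kMinBpm, state.tempoBpm - kStepBpm);
    }
    return state;
}
```

In an Arduino sketch, the `FingerContacts` values would probably come from `digitalRead` on three input pins inside `loop()`, with the previous reading stored between iterations. The pin numbers and whether to use `INPUT_PULLUP` depend on the wiring, so they are left out here.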

STIPEND USAGE:

- Accelerometer: 40 AED: https://www.amazon.ae/AOICRIE-GY-291-ADXL345-Acceleration-Transmission/dp/B09S633YTW

- Jumper wires: 18 AED: https://amzn.eu/d/03P5tjOx

AI USAGE:

So far, I have used AI in this project to discuss my ideas and any problems I may face while building it.