Concept

My concept is heavily inspired by the show Squid Game. I wanted to make a musical instrument (well, I guess in this case more of a music box/player) where light controls the mood of the music, so I used one of the songs from a game in the show. I used a photoresistor (LDR): when it's dark, the tempo slows down to create that “creepy vibe,” and when it's bright, the song plays at its normal tempo. I also used a red LED that lights up when the creepy version plays and a green LED when the normal one plays. These colors are actually used in another one of the games in the show.

Full Code | Video Demo | Schematics

Code that I’m proud of

    ldrValue = analogRead(LDR_PIN);
    isDark = (ldrValue < DARK_THRESHOLD);
    tempoMult = isDark ? CREEPY_TEMPO : NORMAL_TEMPO;
    int duration = (int)((1000.0 / durations[note]) * tempoMult);
    tone(BUZZER_PIN, melody[note], duration);

I’m proud of this because I had to figure out how to make the LDR play a role in the song without just making it turn on and off. The first thing I had to consider was that the LDR doesn’t hand me “LOW” or “HIGH”; analogRead() just gives me a raw number between 0 and 1023. I had to find my own threshold by covering the sensor with my hand and watching the Serial Monitor until I landed on 420. For the actual song, I decided to use a tempo multiplier because I didn’t want the notes themselves to change (just the feel of them). Multiplying the duration by 2.5 in the dark makes every note hang longer, so the whole melody sounds heavier and more unsettling without changing any pitches.
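To make the arithmetic concrete, here is a minimal sketch of the threshold check and the duration calculation as plain functions. The threshold (420) and the dark multiplier (2.5) come from the write-up. The value NORMAL_TEMPO = 1.0 and the helper names isDark() and noteDurationMs() are assumptions for illustration, as is the reading of each durations[] entry as a note divisor (4 = quarter note, 8 = eighth note).

```cpp
// Values from the write-up: threshold 420, dark multiplier 2.5.
// NORMAL_TEMPO = 1.0 is an assumption; the post only says "normal tempo".
constexpr int DARK_THRESHOLD = 420;
constexpr float CREEPY_TEMPO = 2.5f;
constexpr float NORMAL_TEMPO = 1.0f;

// Hypothetical helper: a raw analogRead() value (0..1023) below the
// threshold counts as dark.
bool isDark(int ldrValue) {
  return ldrValue < DARK_THRESHOLD;
}

// Hypothetical helper: durationDivisor is assumed to be a note divisor
// (4 = quarter note, 8 = eighth note), with a whole note lasting 1000 ms.
int noteDurationMs(int durationDivisor, int ldrValue) {
  float tempoMult = isDark(ldrValue) ? CREEPY_TEMPO : NORMAL_TEMPO;
  return static_cast<int>((1000.0f / durationDivisor) * tempoMult);
}
```

With these assumptions, a quarter note lasts 250 ms in bright light (for example a reading of 800) and 625 ms in the dark (for example a reading of 200). The frequency passed to tone() stays the same in both cases.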

How this was made

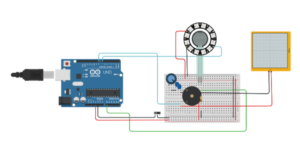

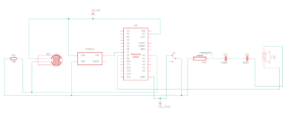

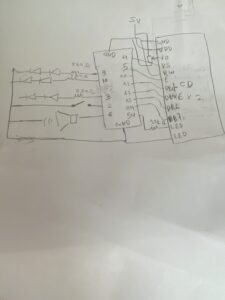

I started with the slide switch wired to pin 2 using INPUT_PULLUP, which meant I didn’t need an external resistor. When the switch is open, the buzzer stops and both LEDs go off straight away. For the LDR I had to build a voltage divider using a 10kΩ resistor because the Arduino can only read voltage, not resistance directly. One leg of the LDR goes to 5V; the other connects to both A0 and one leg of the 10kΩ resistor, and the resistor’s other leg goes to GND. In bright light the LDR’s resistance drops and A0 reads high; in darkness the resistance rises and A0 reads low. I landed on 420 as my threshold after some testing with the Serial Monitor (which I temporarily added but later removed).

The buzzer plays the melody using tone(), and I used code from GitHub for the melody itself (referenced below). The duration of each note gets multiplied by 2.5 in dark conditions to make it creepy. The green LED lights up in bright conditions and the red LED lights up when it goes dark. I also made sure to re-check the LDR and switch inside the melody loop on every single note, so the instrument reacts to light changes mid-song rather than waiting for the whole melody to finish before checking again.
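The voltage divider described above can be checked with a little arithmetic. The sketch below estimates what A0 would read for a given LDR resistance, assuming an ideal divider (5V to LDR to A0 to 10kΩ to GND) and the 0 to 1023 range mentioned earlier. The function name expectedAdcReading() and any LDR resistance values passed to it are hypothetical; a real LDR's resistance depends on the part and the lighting.

```cpp
// Fixed resistor from the write-up; the LDR sits between 5V and A0.
constexpr float FIXED_RESISTOR_OHMS = 10000.0f;
constexpr int ADC_MAX = 1023;  // top of the 0..1023 range

// Hypothetical helper: estimate the analogRead() value on A0 for a
// given LDR resistance, assuming an ideal voltage divider.
int expectedAdcReading(float ldrOhms) {
  // Divider ratio: Vout / Vin = R_fixed / (R_ldr + R_fixed)
  float ratio = FIXED_RESISTOR_OHMS / (ldrOhms + FIXED_RESISTOR_OHMS);
  return static_cast<int>(ratio * ADC_MAX);
}
```

A lower LDR resistance (brighter light) gives a higher reading, which matches the behavior described above. Under the same ideal assumptions, the threshold of 420 would correspond to an LDR resistance of roughly 14 kΩ.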

Reflection & Future Improvements

Honestly, this assignment gave me more trouble than I expected, but in a good way. I went in thinking the LDR would be straightforward since it’s something I’d learned in the analog sensor lesson, and at the hardware level it kind of was, but making it do something musically interesting took more thought. The Squid Game inspiration kept me motivated too because I had a clear vision of what I wanted it to feel like, which made the troubleshooting feel worth it rather than frustrating. If I kept going, I’d want to include more songs from the different games and have more “modes.” I’d also want to try changing the actual pitch in the dark, dropping notes lower to make it sound even more sinister, which feels very on-brand for the Squid Game theme. But for what it is, I’m genuinely happy with how the concept came through.
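One possible way to try the pitch idea is to halve each note's frequency in the dark, which lowers it by one octave. This is only a sketch of that future improvement, not something in the current project; the helper names dropOctaves() and pitchForLight() are hypothetical.

```cpp
// Hypothetical helper: lower a note by whole octaves. Halving a
// frequency drops the pitch by exactly one octave.
int dropOctaves(int frequencyHz, int octaves) {
  for (int i = 0; i < octaves; ++i) {
    frequencyHz /= 2;
  }
  return frequencyHz;
}

// Hypothetical helper: choose the frequency to play for the current
// light level. Dark drops the note one octave; bright leaves it alone.
int pitchForLight(int frequencyHz, bool isDark) {
  return isDark ? dropOctaves(frequencyHz, 1) : frequencyHz;
}
```

The result could be passed to tone() in place of melody[note], so a 440 Hz note would play at 220 Hz in the dark.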

References

https://docs.arduino.cc/language-reference/en/functions/advanced-io/tone/

For the melody: https://github.com/hibit-dev/buzzer/blob/master/src/movies/squid_game/squid_game.ino