Complete midterm:

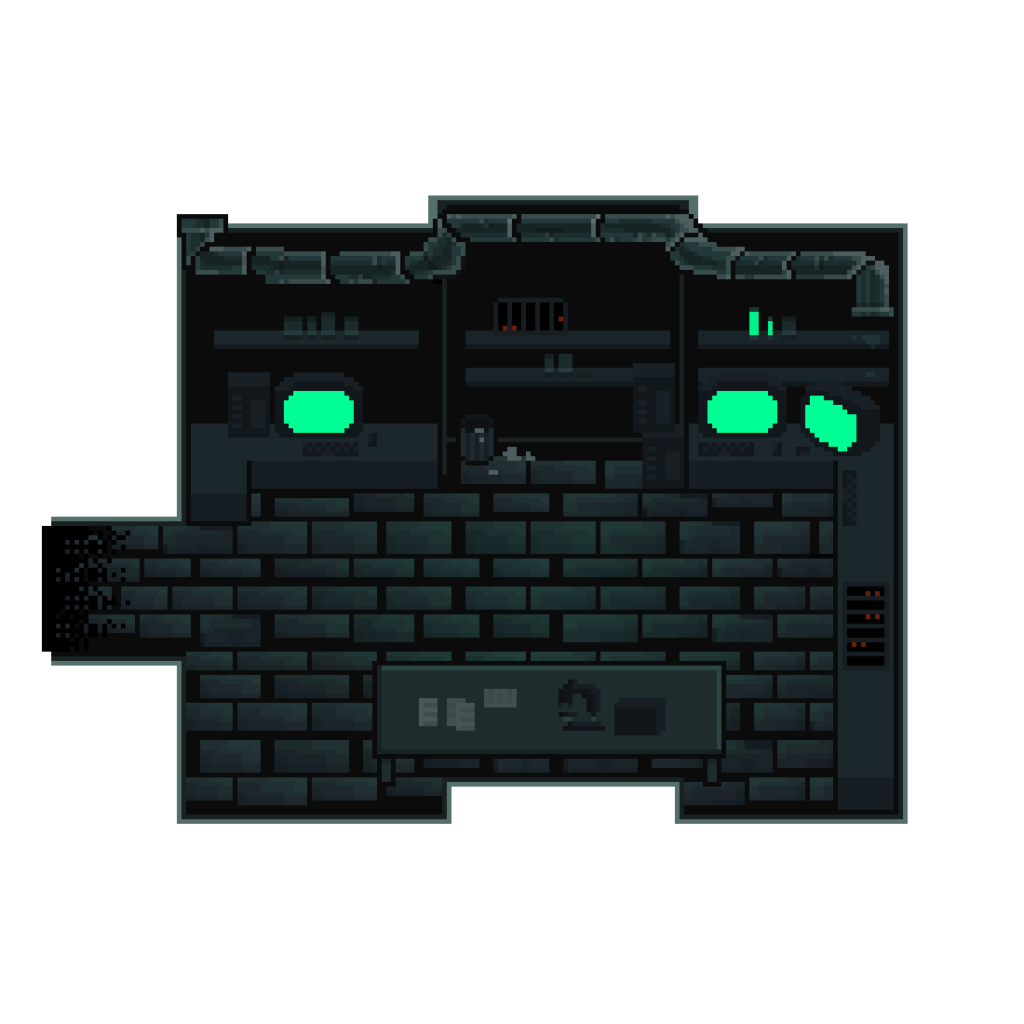

Since the project needed to be interactive, I drew inspiration from several sources I have experienced, such as the interactive haunted Queen Mary story and the film “Night at the Museum”. I decided to create an interactive, spooky story set in a museum. The player makes choices at multiple points in the story, and different choices lead to different endings. I wrote the story myself and organized the branching so that seemingly safe choices can take unexpected turns.

Surprisingly, the most difficult part of my project was not the coding but getting AI to generate images for me. With code, I could see what was wrong and fix it directly, and as long as the code is correct, it does its job. AI image generation, on the other hand, sometimes just doesn't understand what I am asking for. And since the AI doesn't actually see the images, it has real difficulty when I ask it to edit or change an image it generated.

The way my project works is that every scene in the “playing” game state is stored as a nested object inside one large object called storyData. storyData lives in its own .js file, which keeps the main code organized because the main code only reads information from it. All the properties of each scene are in storyData: the scene names and how the scenes connect, the audio, the visual, the duration, the text, the delays, and the choices. An example is below.

```javascript
storyData.scene1_part3 = {
  text: "In the darkness, you hear laughter in the corridors...",
  duration: 5000,
  textDelay: 1500,
  visual: "booth_dark",
  audio: "girl_laughter",
  choices: [
    { label: "Investigate", next: "scene2_part1" },
    { label: "Stay", next: "scene3_part1" },
  ],
};
```

The storyData file is put to work by my drawCurrentScene function, which I am sort of proud of.

```javascript
function drawCurrentScene() {
  background(0);

  let scene = storyData[currentSceneId];

  // image: scale it to fit the window while keeping its aspect ratio
  // (drawn from the window center, so this relies on imageMode(CENTER) being set elsewhere)
  if (myImages[scene.visual]) {
    let currentImg = myImages[scene.visual];
    let aspectRatio = min(
      windowWidth / currentImg.width,
      windowHeight / currentImg.height
    );
    let drawWidth = currentImg.width * aspectRatio;
    let drawHeight = currentImg.height * aspectRatio;
    image(currentImg, windowWidth / 2, windowHeight / 2, drawWidth, drawHeight);
  }

  // timer
  let elapsedTime = millis() - sceneStartTime;

  // audio mechanism for delayed audio; undelayed audio is handled in changeScene()
  // If the scene has an audio delay, the delay has passed, and the delayed sound
  // has not been played yet, play it and record that it has been played.
  // This flag keeps the sound from restarting every time draw() runs.
  if (
    scene.audioDelay &&
    elapsedTime > scene.audioDelay &&
    lateSoundPlayed === false
  ) {
    mySounds[scene.audio].play();
    lateSoundPlayed = true;
  }

  // text (subtitle) display
  // Use the scene's text delay if it has one, otherwise 0.
  // Undelayed text cannot go in changeScene() like the audio, because text has
  // to be redrawn every frame; it must live in draw().
  let delayTime;
  if (scene.textDelay) {
    delayTime = scene.textDelay;
  } else {
    delayTime = 0;
  }

  // once the delay has passed, draw the subtitle
  if (elapsedTime > delayTime) {
    // background box for the subtitles
    rectMode(CENTER);
    noStroke();
    fill(0, 0, 0, 200);
    rect(windowWidth / 2, windowHeight * 0.85, windowWidth * 0.7, windowHeight * 0.1, 10);

    // the text itself
    fill(255);
    textAlign(CENTER, CENTER);
    textSize(windowHeight * 0.04);
    // the 4th parameter limits the text width, keeping it inside the box
    text(scene.text, windowWidth / 2, windowHeight * 0.85, windowWidth * 0.7);
  }

  // scene change logic
  if (elapsedTime > scene.duration) {
    if (scene.autoNext) {
      // the scene has an automatic follow-up scene, so move on to it
      changeScene(scene.autoNext);
    } else {
      // no automatic next scene: draw the dark veil and display the choices
      rectMode(CORNER);
      fill(0, 0, 0, 100);
      rect(0, 0, windowWidth, windowHeight);
      if (!choicesDisplayed) {
        displayChoices();
      }
    }
  }
}
```

The drawCurrentScene function was written so that it works for every scene described in the storyData file. It draws the image scaled to fit the current window, runs the scene timer, uses that timer to handle the audio and text delays and the scene duration, and decides how to move to the next scene based on the scene's properties. When the player does not need to interact, the game flows smoothly, like a video. When the player does need to make a choice, the choice screen stays up for as long as they need. Because every scene goes through the same steps, writing it this way lets all 50 scenes run through one function instead of 50 separate processes, which makes the code much simpler and more organized.
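The per-frame decisions described above can be separated from p5's drawing calls. The sketch below is a hypothetical pure helper, `sceneStep`, that takes a scene shaped like the storyData entries, the elapsed time, and whether the delayed sound has already played, and returns what the current frame should do. The field names (`textDelay`, `audioDelay`, `duration`, `autoNext`) come from the project; the helper and its return object are illustrative assumptions, not the project's code.

```javascript
// Hypothetical helper: decide what one frame of a scene should do, given the
// milliseconds elapsed since the scene started. It mirrors the checks in
// drawCurrentScene but makes no p5 drawing calls.
function sceneStep(scene, elapsed, lateSoundPlayed) {
  // A missing text delay counts as 0, like the fallback in drawCurrentScene.
  const textDelay = scene.textDelay || 0;

  // Delayed audio fires once: only if a delay exists, it has passed,
  // and the one-shot flag has not been set yet.
  const playDelayedAudio =
    Boolean(scene.audioDelay) && elapsed > scene.audioDelay && !lateSoundPlayed;

  let next = null;         // scene id to switch to automatically
  let showChoices = false; // true once the scene ends without an autoNext
  if (elapsed > scene.duration) {
    if (scene.autoNext) {
      next = scene.autoNext;
    } else {
      showChoices = true;
    }
  }

  return {
    showText: elapsed > textDelay,
    playDelayedAudio,
    // The caller stores this back so the sound is not replayed next frame.
    lateSoundPlayed: lateSoundPlayed || playDelayedAudio,
    next,
    showChoices,
  };
}

// Example using the scene1_part3 data from storyData.
const scene = {
  text: "In the darkness, you hear laughter in the corridors...",
  duration: 5000,
  textDelay: 1500,
  visual: "booth_dark",
  audio: "girl_laughter",
  choices: [
    { label: "Investigate", next: "scene2_part1" },
    { label: "Stay", next: "scene3_part1" },
  ],
};

console.log(sceneStep(scene, 1000, false).showText);    // false (text still delayed)
console.log(sceneStep(scene, 2000, false).showText);    // true
console.log(sceneStep(scene, 6000, false).showChoices); // true (no autoNext)
```

Keeping the decisions in a plain function like this would make them testable outside the browser, with drawCurrentScene only drawing whatever the returned flags ask for.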

It also makes editing very easy. If you don't like part of the story or want to add or delete something, this function means you only need to change, add, or delete entries in storyData. And since storyData only stores information, it follows far less strict ordering and organization rules than the main code, so editing it is much closer to writing in plain language.
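One risk of editing storyData freely is a typo in a `next` or `autoNext` value, which would only surface when a player reaches that branch. Below is a minimal sketch of a check that could catch this, assuming every scene stores its links in `autoNext` or in `choices[].next` as in the example above, and assuming "credits" and "restart" are special targets handled by buttonFunction rather than scenes in storyData. The function name `findBrokenLinks` is hypothetical.

```javascript
// Hypothetical checker: list every link in storyData that points to a scene
// that does not exist. "credits" and "restart" are assumed to be handled by
// buttonFunction rather than stored in storyData, so they count as valid.
const SPECIAL_TARGETS = new Set(["credits", "restart"]);

function findBrokenLinks(storyData) {
  const broken = [];
  for (const [id, scene] of Object.entries(storyData)) {
    // Collect the automatic link and every choice link of this scene.
    const targets = [];
    if (scene.autoNext) targets.push(scene.autoNext);
    for (const choice of scene.choices || []) targets.push(choice.next);

    for (const target of targets) {
      // hasOwnProperty avoids matching inherited keys such as "toString".
      const exists =
        Object.prototype.hasOwnProperty.call(storyData, target) ||
        SPECIAL_TARGETS.has(target);
      if (!exists) broken.push(`${id} -> ${target}`);
    }
  }
  return broken;
}

// Example: one choice misspells scene3_part1.
const storyData = {
  intro: { text: "...", duration: 3000, autoNext: "scene1_part3" },
  scene1_part3: {
    text: "In the darkness, you hear laughter in the corridors...",
    duration: 5000,
    choices: [
      { label: "Investigate", next: "scene2_part1" },
      { label: "Stay", next: "scene3_prt1" },
    ],
  },
  scene2_part1: {
    text: "...",
    duration: 4000,
    choices: [{ label: "End", next: "credits" }],
  },
};

console.log(findBrokenLinks(storyData)); // [ 'scene1_part3 -> scene3_prt1' ]
```

Running a check like this once in setup() after editing would flag a broken branch immediately instead of during a playthrough.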

I am also quite proud of my logic for updating element positions when the canvas is resized. The code actually incorporates multiple functions.

```javascript
function windowResized() {
  resizeCanvas(windowWidth, windowHeight);

  // resize and reposition the buttons to match the new window

  // start button
  if (gameState === "start" && startBtn) {
    // size
    let startWidth = max(200, windowWidth * 0.15);
    let startHeight = max(50, windowHeight * 0.08);
    let startFont = max(20, windowWidth * 0.02);
    startBtn.size(startWidth, startHeight);
    // position
    startBtn.position(windowWidth / 2 - startWidth / 2, windowHeight / 2 + 50);
    startBtn.style("font-size", startFont + "px");
  }

  // same for the return button
  if (gameState === "credits" && returnBtn) {
    let btnWidth = max(200, windowWidth * 0.15);
    let btnHeight = max(50, windowHeight * 0.08);
    let btnFont = max(20, windowWidth * 0.02);
    returnBtn.size(btnWidth, btnHeight);
    returnBtn.position(windowWidth / 2 - btnWidth / 2, windowHeight * 0.85);
    returnBtn.style("font-size", btnFont + "px");
  }

  // choice buttons during the game
  if (choicesDisplayed && choiceButtons.length > 0) {
    for (let i = 0; i < choiceButtons.length; i++) {
      let btn = choiceButtons[i];
      updateButton(btn, i, choiceButtons.length);
    }
  }
}
```

In this function I not only resize the canvas to match the window, but also resize and reposition the button elements. When I tested my code, I found that HTML elements do not move the way text drawn on the canvas does: they don't stay in the same position relative to the canvas. So I explicitly calculate their new size and position whenever the canvas is resized. windowResized is also called from the buttonFunction function, so the buttons are laid out correctly every time one of them is shown.
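The start and return buttons follow the same size rules (at least 200 × 50 px and 20 px text, growing with the window) and differ only in their vertical position. A hypothetical helper, `buttonLayout`, could compute these numbers in one place as plain values that can be checked without a browser. The minimums and percentages are copied from windowResized; the helper name and the object it returns are assumptions.

```javascript
// Hypothetical helper: compute a horizontally centered button's size,
// position and font size from the window size, using the same rules as
// windowResized. `top` is the y position of the button's top edge.
function buttonLayout(winWidth, winHeight, top) {
  const width = Math.max(200, winWidth * 0.15);   // never narrower than 200px
  const height = Math.max(50, winHeight * 0.08);  // never shorter than 50px
  const fontSize = Math.max(20, winWidth * 0.02); // never smaller than 20px
  return {
    width,
    height,
    fontSize,
    x: winWidth / 2 - width / 2, // left edge that centers the button
    y: top,
  };
}

// Start button: 50px below the middle of the window.
const start = buttonLayout(2000, 1000, 1000 / 2 + 50);
console.log(start); // { width: 300, height: 80, fontSize: 40, x: 850, y: 550 }

// Return button on a small window, placed at 85% of the window height.
const ret = buttonLayout(800, 600, 600 * 0.85);
console.log(ret.width, ret.height, ret.x); // 200 50 300
```

Each branch of windowResized could then call `size(l.width, l.height)`, `position(l.x, l.y)` and `style("font-size", l.fontSize + "px")` on its button with the returned values, so a change to the sizing rules only has to be made once.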

```javascript
function buttonFunction() {
  // take the value from the clicked button and store it in nextScene
  let nextScene = this.value();

  // remove the current choice buttons from the screen
  for (let i = 0; i < choiceButtons.length; i++) {
    choiceButtons[i].remove();
  }
  // empty the buttons array for the next round of choices
  choiceButtons = [];
  // reset the choice display state
  choicesDisplayed = false;

  if (nextScene === "credits") {
    // change the game state in the state mechanism
    gameState = "credits";
    // display the return (restart) button
    returnBtn.show();
    // reuse windowResized to lay out the text and buttons for the credits screen
    windowResized();
  } else if (nextScene === "restart") {
    // same logic as above, for the start screen
    gameState = "start";
    startBtn.show();
    windowResized();
  } else {
    // an ordinary story choice: follow the story logic, no extra handling needed
    changeScene(nextScene);
  }
}
```

windowResized is called after the button is told to show. This way the buttons will always be in the right place no matter when or how the screen size is changed.
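The branching in buttonFunction works like a small state machine: a button's value either switches the game state ("credits", "restart") or names the next story scene. Below is a minimal sketch of that routing as a pure function with the DOM calls left out. `routeChoice` and its return object are hypothetical; the three cases and the state names come from the code above, and it assumes choice buttons only appear while the game state is "playing".

```javascript
// Hypothetical pure version of the routing in buttonFunction.
// Given the clicked button's value, return the new game state, which button
// (if any) should be shown, and which scene (if any) to change to.
function routeChoice(nextScene) {
  if (nextScene === "credits") {
    return { gameState: "credits", show: "returnBtn", scene: null };
  }
  if (nextScene === "restart") {
    return { gameState: "start", show: "startBtn", scene: null };
  }
  // Any other value is an ordinary story choice; the game stays in "playing"
  // (assumes choice buttons are only shown during play).
  return { gameState: "playing", show: null, scene: nextScene };
}

console.log(routeChoice("credits").gameState);  // credits
console.log(routeChoice("scene2_part1").scene); // scene2_part1
```

buttonFunction would then only carry out the side effects: remove the old buttons, apply the returned state, show the named button, and call windowResized() after showing it.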

```javascript
function startGame() {
  fullscreen(true);
  gameState = "playing";
  startBtn.hide();
  // put any scene name here and it becomes a quick portal to that scene
  changeScene("intro");
}
```

I also want to mention this snippet, which I found can double as a “maintenance platform”. It was originally written to change the scene from the start screen to the intro and the game state from "start" to "playing". But if you replace “intro” with any scene name in storyData, it becomes a portal to that scene. Without it, I would have had to play through the whole story every time I changed something and wanted to see the effect.
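Editing the string in startGame works, but it has to be changed back before submitting. One possible extension, assuming the sketch runs in a browser, is to read the starting scene from the page URL (for example `index.html?scene=scene2_part1`) and fall back to "intro" when the parameter is missing or names a scene that is not in storyData. `pickStartScene` is a hypothetical helper; `URLSearchParams` is a standard browser (and Node.js) API.

```javascript
// Hypothetical debug "portal": choose the first scene from a query string such
// as "?scene=scene2_part1", falling back to a default scene when the parameter
// is absent or does not match a scene in storyData.
function pickStartScene(search, storyData, fallback = "intro") {
  const requested = new URLSearchParams(search).get("scene");
  if (requested && Object.prototype.hasOwnProperty.call(storyData, requested)) {
    return requested;
  }
  return fallback;
}

const storyData = { intro: {}, scene2_part1: {} };

console.log(pickStartScene("?scene=scene2_part1", storyData)); // scene2_part1
console.log(pickStartScene("?scene=typo", storyData));         // intro
console.log(pickStartScene("", storyData));                    // intro
```

In startGame, `changeScene("intro")` would become `changeScene(pickStartScene(window.location.search, storyData))`, so the normal URL still starts at the intro while a test URL jumps straight to the scene being worked on.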

Areas for improvement include adding fade-in/fade-out effects and more animation. When I played through the game, I felt that some scenes needed a gradual introduction, which a fade-in would suit perfectly. I wasn't able to add it because of the time limit: I tried to code it, but bugs got in the way and I did not have enough time to troubleshoot the whole thing, so I deleted it. The game would also look better with more animation, but it would be nearly impossible to reproduce the shapes of the spirits and ghosts in my current images with p5 code. A better approach would be to make the plot a video and code the choices in between, but that would diverge from the goal of the midterm.
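For reference, a fade-in could be driven by the same scene timer drawCurrentScene already uses (`millis() - sceneStartTime`): map the elapsed time onto an opacity between 0 and 255 and clamp it. Below is a minimal sketch; `fadeAlpha`, `fadeOutAlpha` and the 1000 ms default are assumptions. In p5 the result could be passed to `tint(255, alpha)` before `image()` for a fade-in, or used as the alpha of a black rectangle drawn over the scene for a fade-out.

```javascript
// Hypothetical helper: opacity (0-255) for a linear fade-in that lasts
// `fadeMs` milliseconds from the start of the scene.
function fadeAlpha(elapsedMs, fadeMs = 1000) {
  if (fadeMs <= 0) return 255; // no fade: fully visible
  // progress through the fade, clamped to [0, 1]
  const t = Math.min(Math.max(elapsedMs / fadeMs, 0), 1);
  return Math.round(t * 255);
}

// Opacity for a fade-out over the last `fadeMs` of a scene lasting `durationMs`.
function fadeOutAlpha(elapsedMs, durationMs, fadeMs = 1000) {
  return fadeAlpha(durationMs - elapsedMs, fadeMs);
}

console.log(fadeAlpha(0));             // 0
console.log(fadeAlpha(500));           // 128
console.log(fadeAlpha(2000));          // 255
console.log(fadeOutAlpha(4500, 5000)); // 128
```

Because the opacity is computed from elapsed time rather than incremented every frame, it stays correct if the frame rate drops and needs no extra state to reset when the scene changes.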

AI was used to some extent in this work. All the images in this work were generated by Google Gemini according to my requirements. For the code, Gemini helped me with button styling: because HTML elements were new to me, I had trouble figuring out how to style them, so Gemini introduced the different ways to style buttons and I wrote the styling code based on the examples it provided. It also gave me the idea of using an array for the buttons on screen so they can easily be added and removed (lines 433-436). (I originally only had an array for the choices, so the buttons just got stuck on the screen.) It also helped me write “not” in an if statement (line 490), because I had remembered it as ||, which actually means “or”, and the code failed to work. Gemini also assisted me when I needed sound effects and voiceovers: it suggested using Freesound and ElevenLabs and gave me tutorials on how to use them. Toward the end of my project, I also used Gemini for debugging once or twice, when the game crashed without any console error messages, because it was difficult for me to pick out the error among hundreds of lines of code. AI was used for help and assistance and did not write the code. The code was written based on my understanding of what was taught in class and what AI explained to me.