I want to create an immersive space experience. Users arrive at an empty canvas and use pressure sensors to add planets, meteors, and stars. Once at least 5 stars have been placed, they form a constellation, which grows as the user adds more stars. Stars and planets float around while meteors soar by, so it feels like you’re in space. I want to project the p5.js sketch with a projector so users can really feel the grandeur of it.

The Interaction

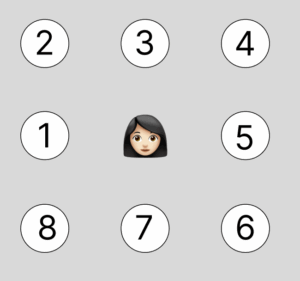

I will place 8 stepping stones on the floor. Each stone does something different when a user steps on it:

Star Makers (Stones 1, 4, 6):

- Quick tap: Adds one small white star to that stone’s constellation

- Each stone creates its own independent star collection

- When 5 stars are placed, they automatically connect with glowing lines to form a constellation

- Each constellation can grow to a maximum of 9 stars, becoming more intricate with every star added

- Three unique constellations can exist simultaneously in different regions of the sky
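
The star rules above could be sketched in plain JavaScript roughly as follows. This is only an illustration under assumptions: the function names are hypothetical, and because the description does not say how the glowing lines are routed, the sketch simply connects stars in the order they were placed. The thresholds (5 and 9) and the stone numbers come from the list above.

```javascript
// Hypothetical helper names; thresholds are taken from the description above.
const MIN_STARS_FOR_CONSTELLATION = 5;
const MAX_STARS = 9;
const STAR_STONES = [1, 4, 6];

// One independent star collection per star-maker stone.
function createConstellations() {
  const byStone = new Map();
  for (const stone of STAR_STONES) byStone.set(stone, []);
  return byStone;
}

// Adds a star for the given stone. Returns false if the stone is not a
// star maker or if its constellation already holds MAX_STARS stars.
function addStar(constellations, stone, x, y) {
  const stars = constellations.get(stone);
  if (!stars || stars.length >= MAX_STARS) return false;
  stars.push({ x, y });
  return true;
}

// Returns the line segments to draw. Assumption: stars are joined in
// placement order; no lines exist until the 5-star minimum is reached.
function constellationLines(stars) {
  if (stars.length < MIN_STARS_FOR_CONSTELLATION) return [];
  const lines = [];
  for (let i = 1; i < stars.length; i++) {
    lines.push([stars[i - 1], stars[i]]);
  }
  return lines;
}
```

In a p5.js sketch, `draw()` could loop over each stone's stars, draw them as points, and then stroke the segments returned by `constellationLines`.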

Planet Makers (Stones 2, 5, 7):

- Hold for 3+ seconds: Materializes a planet in space

- Random planet type appears: gas giants, ringed planets, rocky worlds, ice planets, or mysterious alien worlds

- Planets drift randomly through space, floating past your constellations

- Creates a living, breathing solar system
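
One possible way to model the planets is sketched below. Everything here is an assumption made for illustration: the names `spawnPlanet` and `driftPlanet`, the speed range, and the choice to wrap planets around the canvas edges are not part of the plan above, which only says that a random planet type appears and drifts randomly.

```javascript
// Planet types from the list above; the short labels are hypothetical.
const PLANET_TYPES = ['gas giant', 'ringed', 'rocky', 'ice', 'alien'];

// Creates a planet with a random type, position, and slow drift velocity.
// rng is injectable so the behaviour can be tested deterministically.
function spawnPlanet(width, height, rng = Math.random) {
  const type = PLANET_TYPES[Math.floor(rng() * PLANET_TYPES.length)];
  const angle = rng() * 2 * Math.PI;
  const speed = 0.2 + rng() * 0.6; // assumed range, in pixels per frame
  return {
    type,
    x: rng() * width,
    y: rng() * height,
    vx: Math.cos(angle) * speed,
    vy: Math.sin(angle) * speed,
  };
}

// Moves a planet one frame and wraps it around the canvas edges
// (an assumption; bouncing or respawning would work as well).
function driftPlanet(planet, width, height) {
  planet.x = (planet.x + planet.vx + width) % width;
  planet.y = (planet.y + planet.vy + height) % height;
}
```

Calling `driftPlanet` once per frame for every planet keeps them moving past the constellations without ever leaving the projection.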

Meteor Makers (Stones 3, 8):

- Quick tap: Shoots one meteor across the sky in a random direction

- Hold for 3+ seconds: Triggers a meteor shower with 5 meteors streaking across space

- Adds dynamic movement and excitement to the scene
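
Telling a quick tap from a 3-second hold could work along these lines. This is a sketch under assumptions: the `PressTracker` class and its methods are hypothetical, any press shorter than 3 seconds is treated as a quick tap, and the gesture is classified when the foot leaves the stone. How the pressure-sensor readings reach the sketch is left open.

```javascript
const HOLD_MS = 3000; // "Hold for 3+ seconds" from the description above

// Tracks when each stone was pressed and classifies the gesture on release.
class PressTracker {
  constructor() {
    this.pressedAt = new Map();
  }

  // Call when a stone's sensor goes from released to pressed.
  press(stone, nowMs) {
    if (!this.pressedAt.has(stone)) this.pressedAt.set(stone, nowMs);
  }

  // Call when the sensor is released. Returns 'tap', 'hold', or null
  // if the stone was never pressed.
  release(stone, nowMs) {
    if (!this.pressedAt.has(stone)) return null;
    const duration = nowMs - this.pressedAt.get(stone);
    this.pressedAt.delete(stone);
    return duration >= HOLD_MS ? 'hold' : 'tap';
  }
}

// A tap launches one meteor, a hold launches a shower of five.
function meteorCount(gesture) {
  return gesture === 'hold' ? 5 : 1;
}

// Each meteor gets its own random direction (angle in radians).
function launchMeteors(gesture, rng = Math.random) {
  return Array.from({ length: meteorCount(gesture) }, () => ({
    angle: rng() * 2 * Math.PI,
  }));
}
```

The same tracker could serve the planet stones: a `'hold'` result on stones 2, 5, or 7 would spawn a planet, while a `'tap'` would be ignored.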

Atmosphere Control (Potentiometer dial):

- Physical dial near the stepping area

- Controls both the visual intensity and audio volume

- Low: Clear, minimal space with silence

- High: Rich nebula clouds, cosmic dust, and immersive ambient soundscape

- Gives users creative control over the mood of their universe
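
The dial mapping could look something like this. The hardware is not specified above, so the sketch assumes a 10-bit reading (0 to 1023), as many microcontroller analog inputs produce; `atmosphereFromDial` and the field names are hypothetical.

```javascript
const ADC_MAX = 1023; // assumption: 10-bit analog reading from the dial

// Converts a raw dial reading into settings for visuals and audio.
// Out-of-range readings are clamped so noisy input cannot break the scene.
function atmosphereFromDial(raw) {
  const clamped = Math.min(Math.max(raw, 0), ADC_MAX);
  const level = clamped / ADC_MAX; // 0 = clear and silent, 1 = rich and loud
  return {
    level,
    volume: level, // 0..1 audio volume
    nebulaAlpha: Math.round(level * 255), // 0..255 opacity for nebula and dust
  };
}
```

At a reading of 0 the scene stays clear and silent; at 1023 the nebula layers are fully opaque and the soundscape plays at full volume.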

The Experience

Users approach a darkened floor projection showing empty space. As they explore the stepping stones, they discover they can build their own universe, star by star, constellation by constellation. The moment when 5 stars connect into a glowing constellation creates a magical sense of achievement. Planets drift past their creations, meteors streak across the sky, and the atmosphere can be dialed from stark and minimal to rich and dramatic.