Sign Sprint

Concept

SignSprint is a computer vision game that recognizes seven hand gestures: Thumbs Up, Thumbs Down, Victory, Pointing Up, Closed Fist, I Love You and Open Palm. It is built on a gesture recognition machine learning model from Google AI for Developers (MediaPipe), which can be tried out in MediaPipe Studio.
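In the model's output, each of these gestures is identified by a category name such as `Thumb_Up`, `Pointing_Up` or `ILoveYou`. The game code looks up the emoji for a detected gesture with `gestureMap[detectedName]`, and each target has a `name` and an `emoji`. The table below is a minimal sketch of how those could be defined. The `GESTURES` array and the emoji choices are assumptions, since the game's own definitions are not shown in this post.

```javascript
// Hypothetical gesture table: MediaPipe Gesture Recognizer category names
// paired with the emoji shown to the player (emoji choices are assumed).
const GESTURES = [
  { name: "Thumb_Up", emoji: "👍" },
  { name: "Thumb_Down", emoji: "👎" },
  { name: "Victory", emoji: "✌️" },
  { name: "Pointing_Up", emoji: "☝️" },
  { name: "Closed_Fist", emoji: "✊" },
  { name: "ILoveYou", emoji: "🤟" },
  { name: "Open_Palm", emoji: "✋" },
];

// Name -> emoji lookup, in the style of the gestureMap used in drawGame().
// Any category not in the table (e.g. an unrecognised hand) maps to undefined.
const gestureMap = Object.fromEntries(GESTURES.map((g) => [g.name, g.emoji]));
```

Storing each gesture once and deriving the lookup from it keeps the list of targets and the emoji mapping from drifting apart.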

The goal of the game is to make as many signs as possible within a set time limit. The game keeps an array of the possible hand gestures and displays one of them at random; the player then has to make the gesture that is shown. The player's score is recorded and displayed when the time runs out.

The ML model estimates the positions of key points on the hand (hand landmarks) and uses them to classify the gesture. A validation condition checks whether the detected gesture exactly matches the target gesture, and only then does the target change. The model is also set to detect one hand at a time, so showing more than one hand can stop the gesture from being detected.
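The target-and-validation loop described above can be sketched as plain functions, separate from the p5.js drawing code. This is a hypothetical version, not the game's actual code: `pickTarget`, `checkGesture` and the `{ score, target }` state object are made-up names, and never repeating the previous target is a design choice the original game may not make. The random number generator is passed in so the logic can be tested deterministically.

```javascript
// Hypothetical list of target gesture names (MediaPipe category names).
const GESTURE_NAMES = ["Thumb_Up", "Thumb_Down", "Victory", "Pointing_Up",
  "Closed_Fist", "ILoveYou", "Open_Palm"];

// Pick a random target other than the previous one (assumed rule).
// rng must return a number in [0, 1), like Math.random.
function pickTarget(previous, rng = Math.random) {
  const choices = GESTURE_NAMES.filter((name) => name !== previous);
  return choices[Math.floor(rng() * choices.length)];
}

// Validation condition: only an exact name match scores and changes the target.
function checkGesture(state, detectedName, rng = Math.random) {
  if (detectedName !== state.target) {
    return state; // wrong gesture or no hand: keep the same target and score
  }
  return { score: state.score + 1, target: pickTarget(state.target, rng) };
}

// Usage
let state = { score: 0, target: "Victory" };
state = checkGesture(state, "Open_Palm"); // no match: state is unchanged
state = checkGesture(state, "Victory");   // match: score becomes 1, new target
```

Keeping the rule in one pure function makes the exact-match condition easy to check without a webcam.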

The main function sets up the machine learning model, detects gestures and randomly selects a target gesture.
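For each video frame, the recognizer returns a result whose `gestures` field holds one list of ranked categories per detected hand, each category carrying a `categoryName` and a confidence `score`; `drawGame()` reads the top category of the first hand. The sketch below wraps that read in a helper that also ignores low-confidence guesses. The name `topGesture` and the 0.6 threshold are assumptions, and the result objects are hand-written stand-ins shaped like MediaPipe's output so the example runs without a camera.

```javascript
// Hypothetical helper: best gesture name for the first hand, or null when
// no hand is found or the top guess is below the (assumed) confidence threshold.
function topGesture(result, minScore = 0.6) {
  if (!result || !result.gestures || result.gestures.length === 0) {
    return null; // no hand in the frame
  }
  const best = result.gestures[0][0]; // first hand, top-ranked category
  if (!best || best.score < minScore) {
    return null; // too uncertain to count
  }
  return best.categoryName;
}

// Stand-in objects shaped like a gesture recognizer result (illustrative values).
const confident = { gestures: [[{ categoryName: "Victory", score: 0.92 }]] };
const unsure = { gestures: [[{ categoryName: "Victory", score: 0.41 }]] };
const empty = { gestures: [] };

console.log(topGesture(confident)); // Victory
console.log(topGesture(unsure));    // null
console.log(topGesture(empty));     // null
```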

Code I am proud of

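```javascript
// Main play screen: draws the webcam feed, checks the player's gesture
// against the target, and shows the target emoji, score and timer.
function drawGame() {
  background(0);
  image(video, 0, 0, width, height);

  // Timing: switch to the end screen once the game time has elapsed
  let elapsed = (millis() - startTime) / 1000;
  if (elapsed >= gameTime) {
    gamestate = "end";
  }

  // Gesture detection and scoring
  let detectedName;
  let detectedEmoji;

  if (results && results.gestures && results.gestures.length > 0) {
    // Top-ranked gesture of the first detected hand
    detectedName = results.gestures[0][0].categoryName;
    detectedEmoji = gestureMap[detectedName];

    // Only an exact match scores, and only while no cooldown is active
    if (targetGesture && targetGesture.name === detectedName && !matchCooldown) {
      score++;
      matchCooldown = true; // blocks further scoring until matchCooldown is reset
      correct.play();       // sound for feedback
      pickNewTarget();
    }
  }

  // Target emoji
  if (targetGesture) {
    textFont("OpenSans");
    textAlign(CENTER, TOP);
    textSize(70);
    text(targetGesture.emoji, width / 2, 30);
  }

  // Score: star emoji followed by the current score
  fill(255);
  textAlign(RIGHT, TOP);
  textSize(30);
  textFont("OpenSans");
  text("⭐", width - 55, height - 45);
  textFont(font);
  text(score, width - 20, height - 45);

  // Time remaining, clamped so it never shows a negative number
  textSize(40);
  text(max(0, ceil(gameTime - elapsed)), width - 20, 20);
}
```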

The code I am proud of is the drawGame() function, which contains the bulk of the game mechanics. It first draws the camera feed and checks the timer. It then reads the gesture the player is making, as detected through the camera, and compares it with the target gesture. If the two match, it increases the score, plays a sound and picks a new target. The function also draws the target gesture as an emoji and displays the current score and the time left on screen. Once the set time has elapsed, it switches the game to the end screen.
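Two details of `drawGame()` are worth isolating: the countdown, which rounds the remaining seconds up, and the `matchCooldown` flag, which appears to stop one gesture from being counted several times in a row. The post does not show where `matchCooldown` is reset, so the sketch below swaps the boolean for a timestamp-based cooldown. `timeRemaining`, `tryScore`, `GAME_TIME_S` and `COOLDOWN_MS` are hypothetical names, and the 30-second game length and 500 ms cooldown are illustrative values, not the game's settings.

```javascript
// Illustrative settings (assumed, not taken from the game).
const GAME_TIME_S = 30;
const COOLDOWN_MS = 500;

// Seconds left, rounded up like ceil(gameTime - elapsed) but never below zero.
function timeRemaining(startMs, nowMs, gameTimeS = GAME_TIME_S) {
  const elapsed = (nowMs - startMs) / 1000;
  return Math.max(0, Math.ceil(gameTimeS - elapsed));
}

// Timestamp cooldown: a match only counts if COOLDOWN_MS has passed since the
// last one. Start with lastMatchMs = -Infinity so the first match always counts.
function tryScore(state, detectedName, nowMs, nextTarget) {
  const coolingDown = nowMs - state.lastMatchMs < COOLDOWN_MS;
  if (coolingDown || detectedName !== state.target) {
    return state; // no match, or too soon after the previous match
  }
  return { score: state.score + 1, target: nextTarget, lastMatchMs: nowMs };
}
```

A timestamp clears itself as time passes, so there is no separate place in the code that has to remember to reset the flag.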

How it was made

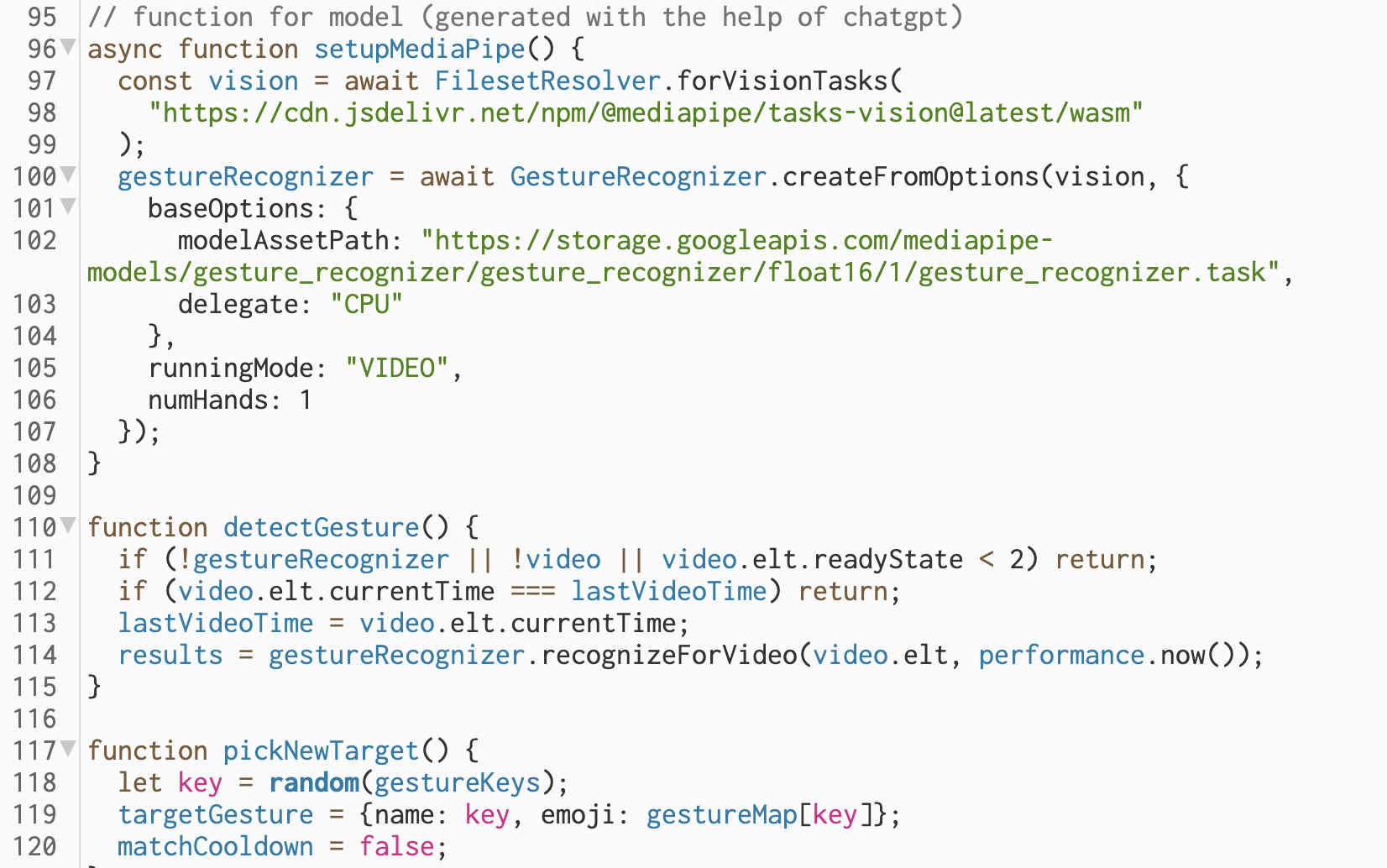

The game mainly runs on the hand gesture recognition model described above. The biggest challenge in making this game was importing the gesture recognition model into p5.js. I used Claude AI to help with this process: with its help, I modified the HTML file and wrote functions that load the right model into the p5.js sketch so the game can run. Claude AI was also used to write the setupMediaPipe() and detectGesture() functions that the scoring system depends on.
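The post does not include `setupMediaPipe()` or `detectGesture()`. As a hedged illustration, MediaPipe's web library (`@mediapipe/tasks-vision`) provides `GestureRecognizer.recognizeForVideo(video, timestamp)` for video-mode recognition, and the timestamps passed to it should keep increasing. A common pattern is to run the model only when the video has moved on to a new frame. The sketch below assumes a recognizer already created in `"VIDEO"` running mode; `makeDetector` is a hypothetical name, and a fake recognizer and video object stand in for the real ones (in p5.js the underlying `<video>` element is `video.elt`) so the logic runs outside a browser.

```javascript
// Hypothetical detection step: run the model only when the video frame changes,
// and reuse the previous result otherwise.
function makeDetector(recognizer) {
  let lastVideoTime = -1;
  let lastResults = null;
  return function detect(video, nowMs) {
    if (video.currentTime !== lastVideoTime) {
      lastVideoTime = video.currentTime;
      lastResults = recognizer.recognizeForVideo(video, nowMs);
    }
    return lastResults;
  };
}

// Stand-ins for a real GestureRecognizer and <video> element.
let calls = 0;
const fakeRecognizer = {
  recognizeForVideo(video, timestampMs) {
    calls++;
    return { gestures: [[{ categoryName: "Victory", score: 0.9 }]] };
  },
};
const fakeVideo = { currentTime: 0 };

const detect = makeDetector(fakeRecognizer);
detect(fakeVideo, 16);         // new frame: the model runs
detect(fakeVideo, 32);         // same frame: cached result is returned
fakeVideo.currentTime = 0.033;
detect(fakeVideo, 48);         // new frame: the model runs again
```

In a sketch, `detect(video.elt, millis())` could be called once per `draw()` and its return value stored in `results`.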

The game logic is mostly built from if conditions and boolean flags. The start and end screen backgrounds were generated entirely by Gemini AI, and the game's sounds (the theme music and the correct-match sound) came from Freesound.
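The if-based game flow can be summarized as a small state machine. Only the `"end"` state appears in `drawGame()`; the `"start"` and `"play"` names and the key press that starts or restarts a round are assumptions for this sketch, which shows how each screen hands over to the next.

```javascript
// Hypothetical screen flow: "start" -> "play" -> "end" -> "start".
// keyPressed and timeUp are assumed triggers, passed in as booleans.
function nextState(state, { keyPressed = false, timeUp = false } = {}) {
  if (state === "start" && keyPressed) return "play";
  if (state === "play" && timeUp) return "end";
  if (state === "end" && keyPressed) return "start";
  return state; // nothing relevant happened: stay on the current screen
}

// Usage
let screen = "start";
screen = nextState(screen, { keyPressed: true }); // "play"
screen = nextState(screen, { timeUp: true });     // "end"
```

In `draw()`, the current state would then decide which screen function to call, for example `drawGame()` while the state is `"play"`.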

Reflection

This was a really fun game to create. I got to explore all the concepts we covered in class, gained a better understanding of decision structures, and learned how to import models into p5.js. One possible improvement is increasing the number of hands that can be used in the game, and the hand gesture recognition could be developed further to remotely control other devices, generate art and express creativity. I see this project as a stepping stone for exploring my interest in computer vision and its applications in interactive media, and I am excited to see how I can combine this knowledge and skill with physical computing.