Concept & User Interaction

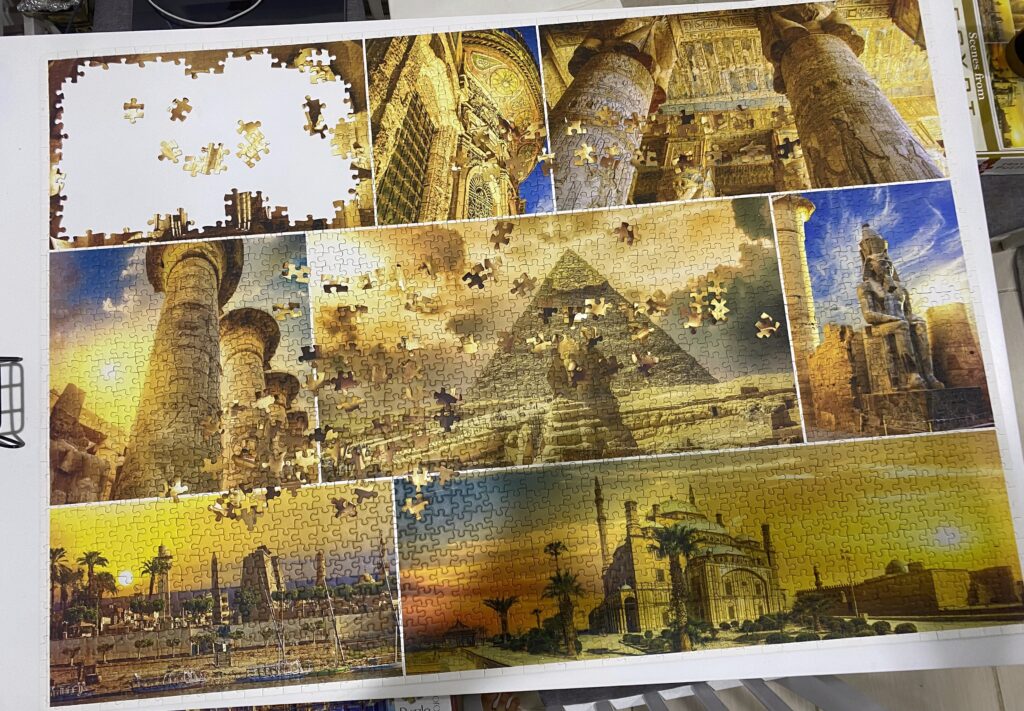

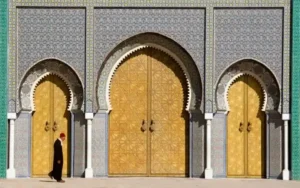

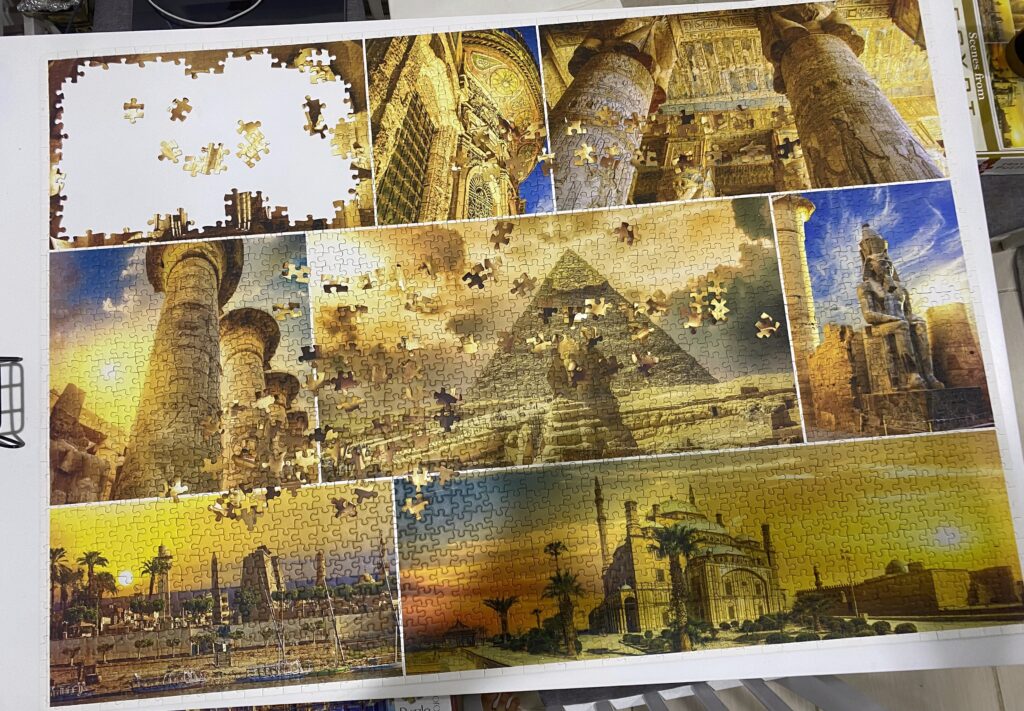

I was very hesitant about choosing an idea for my midterm. I really wanted to incorporate something personal to me and create a game that I could imagine my younger siblings playing. The idea I ended up with is a puzzle game! I love solving jigsaw puzzles, so when I thought of digitizing that experience, I was immediately excited. In addition, I decided to give it an Ancient Egypt theme. The last puzzle I solved featured landmarks in Egypt (image below), so, inspired by that, the images the user can solve as puzzles in my game will be Egyptian landmarks (but more cartoon-like).

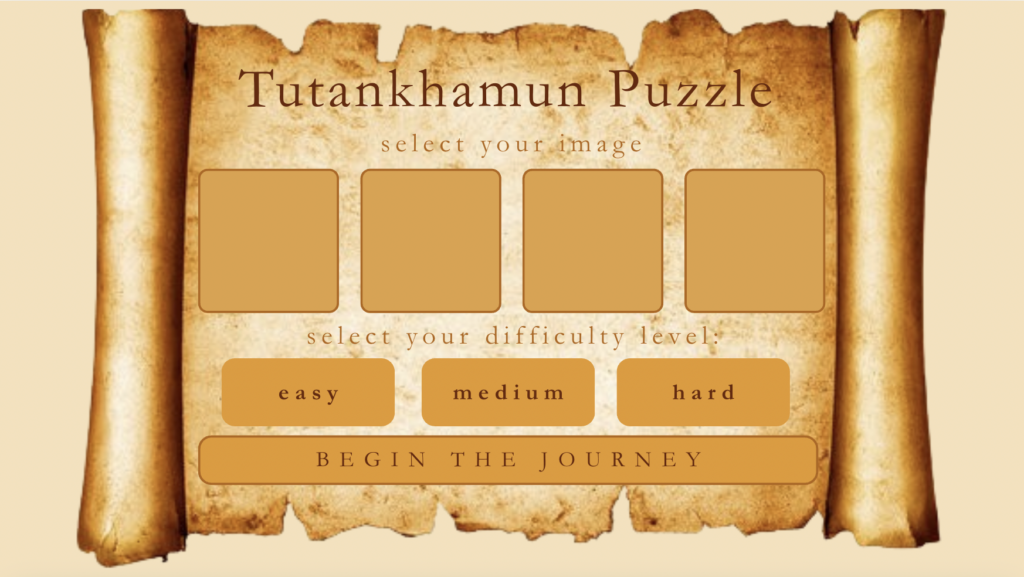

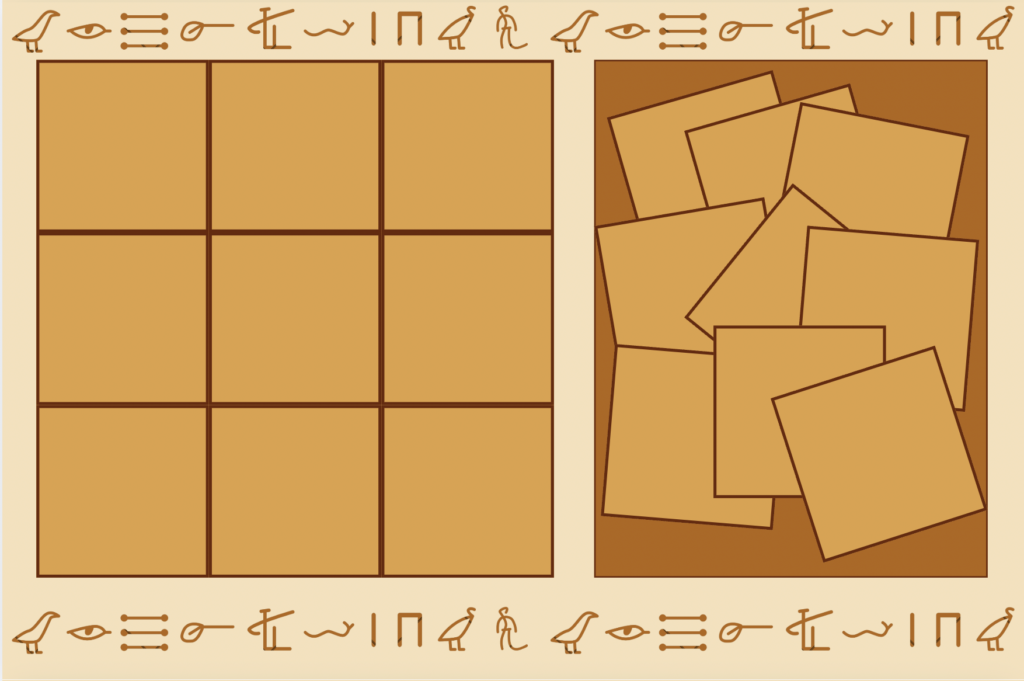

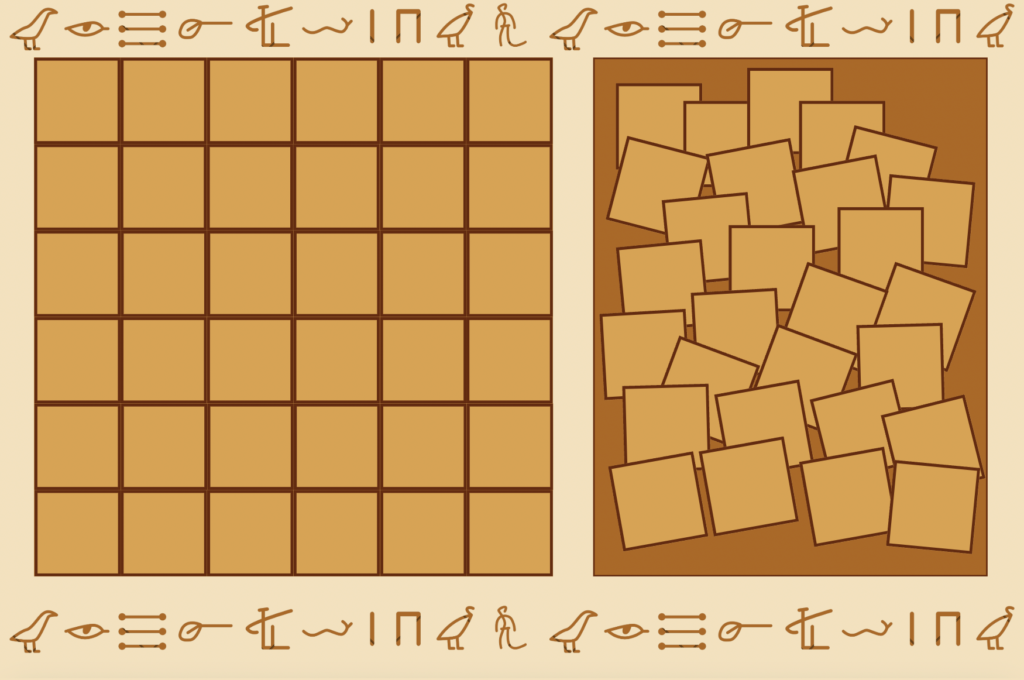

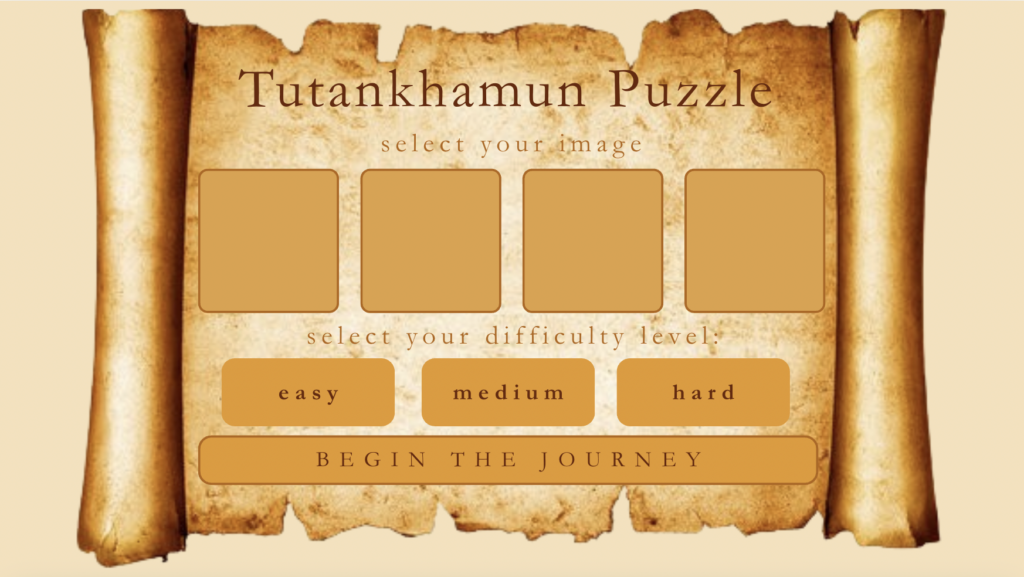

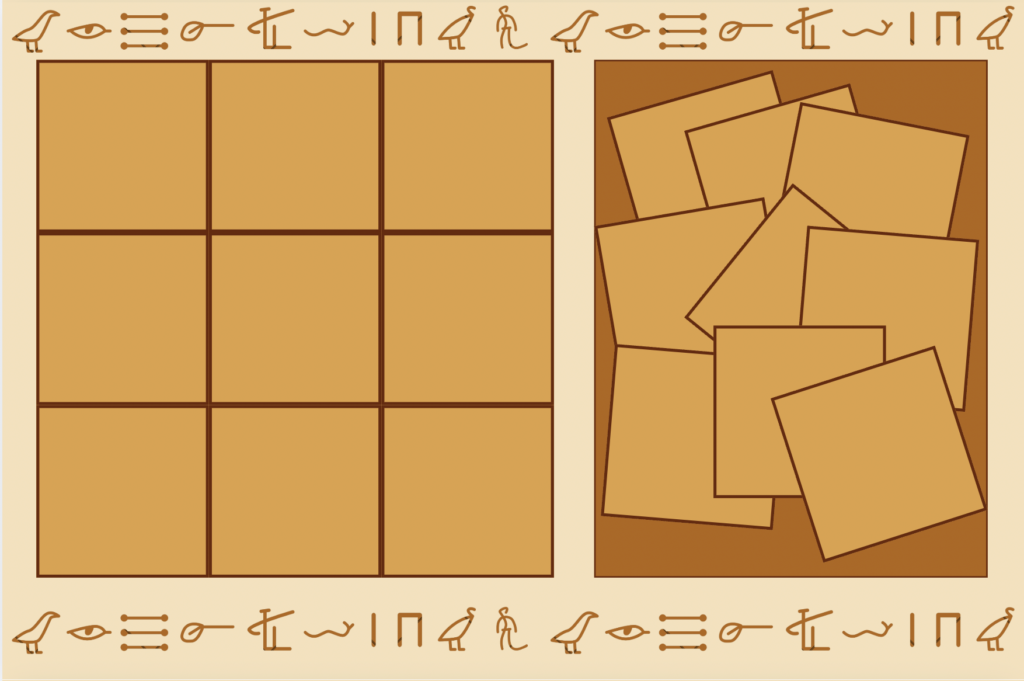

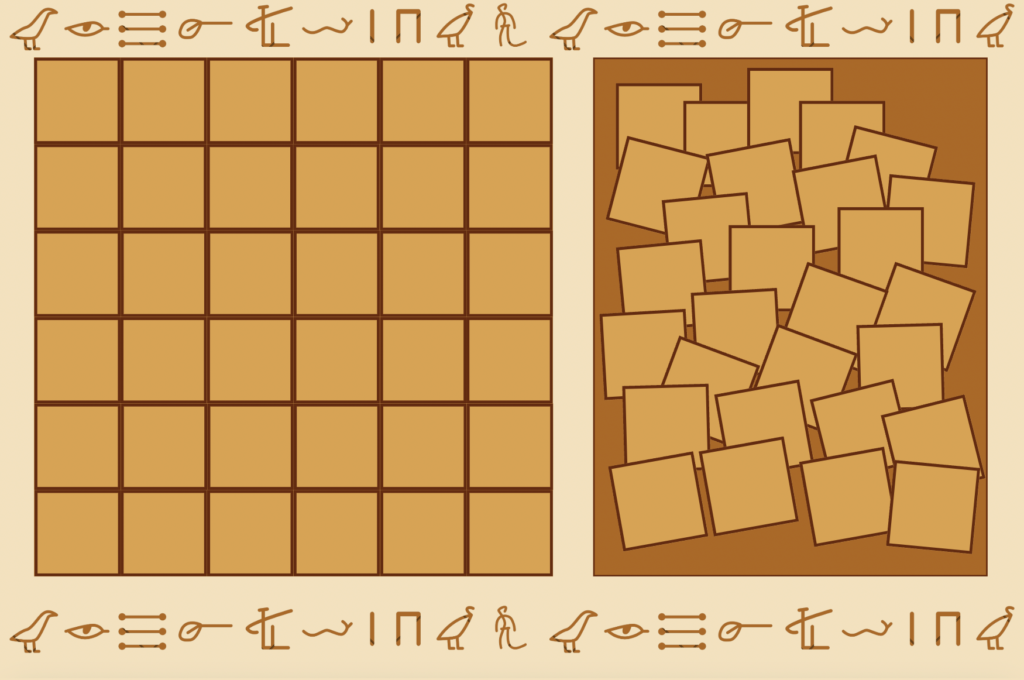

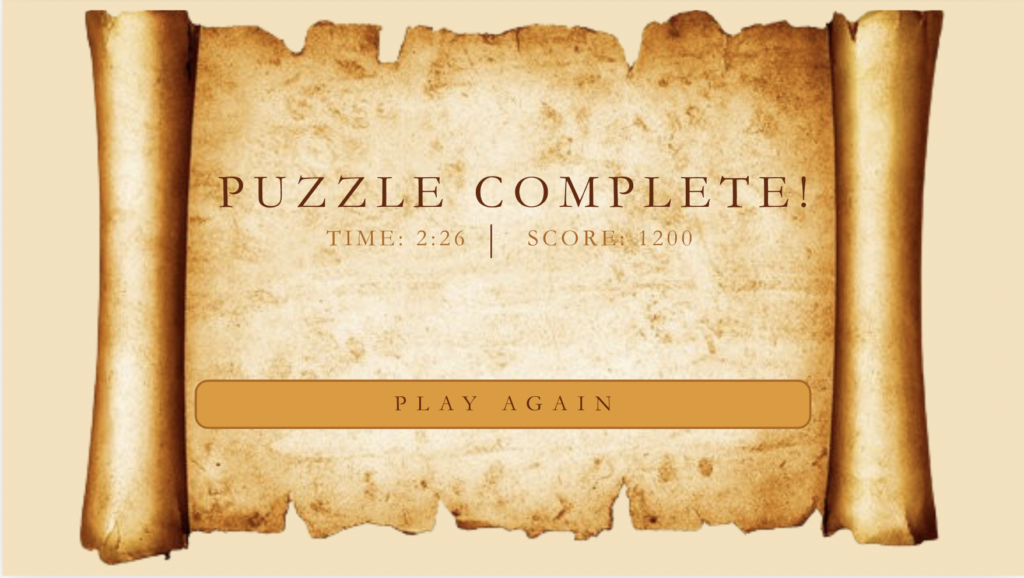

The first thing I started working on was the design. Even though we spend a lot of time on p5.js in class, I feel that the design and aesthetics of our projects really matter, since they are what users perceive first. So, I went to Canva and started working on what I want my game to look like. I also started looking for fonts that would match my theme. Since I don’t have Canva Pro, I wasn’t able to use the fonts I found online in my designs, but I will definitely be using them in my code. One thing missing from my design that I definitely plan to add in my final implementation is a reference image the user can consult on the game screens. It was a bit difficult to fit everything into the dimensions provided by Canva, but I will keep working on the layout in the coding stage.

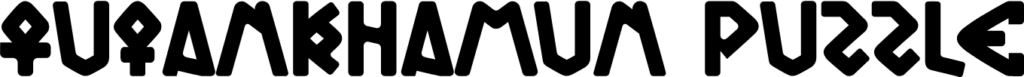

The font I’m planning to use would look something like this if used for the title:

I’m still debating whether it’s a bit illegible or fits the theme nicely. For now, I’ll keep it as a possible option, because I feel that regular serif fonts can be a bit boring.

After the design, the next question is obviously user interaction and the actual code. The user interaction in my program is pretty straightforward: the user selects a puzzle image and a difficulty, and then solves the puzzle by moving the pieces from the right side (which holds the jumbled-up pieces) onto the board on the left side. When I was first thinking about my idea, I pictured jigsaw-shaped pieces; however, I felt that actually implementing that in p5.js might be too troublesome, so I settled for simply splitting the image into smaller squares. Then, thinking about how the pieces would go onto the solved board, I decided that if the user moves a piece close to its correct position in the image, the piece should snap into place (paired with appropriate sound effects). I think that is the most satisfying way to gamify this interaction, and I can already imagine what the code for it could look like.

In addition to sound effects, I’m planning to add background music to each screen, probably relating back to the image selected for the puzzle. Finally, I will probably pair the ending screen with a celebratory sound effect and confetti. For the confetti, I’m imagining Egyptian hieroglyphs raining down, as that would link back to the theme I’m going for and would be a unique touch. However, I still have to think more about how to implement that.
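The snap-into-place behavior described above could come down to a simple distance check. This is a minimal sketch under my own assumptions, not final game code: it assumes each piece is a plain object storing its current top-left position (`x`, `y`) and its correct slot on the board (`targetX`, `targetY`), and `trySnap` and `SNAP_DISTANCE` are hypothetical names with an arbitrary 20-pixel tolerance.

```javascript
// Hypothetical snap tolerance in pixels (chosen arbitrarily for illustration)
const SNAP_DISTANCE = 20;

// Hypothetical helper: if a dropped piece is close enough to its correct
// slot, move it exactly onto the slot and mark it as placed.
function trySnap(piece, snapDistance = SNAP_DISTANCE) {
  // Straight-line distance between the piece's corner and its slot's corner
  const dx = piece.x - piece.targetX;
  const dy = piece.y - piece.targetY;
  const distance = Math.hypot(dx, dy);

  if (distance <= snapDistance) {
    // Close enough: lock the piece into its exact position
    piece.x = piece.targetX;
    piece.y = piece.targetY;
    piece.placed = true;
  }
  return piece.placed === true;
}

// Example: a piece dropped 12 px right of and 5 px below its slot
const piece = { x: 112, y: 205, targetX: 100, targetY: 200, placed: false };
console.log(trySnap(piece)); // true
console.log(piece.x, piece.y); // 100 200
```

In p5.js, this check would naturally run inside `mouseReleased()`, and p5's built-in `dist()` could stand in for `Math.hypot`. A `true` result is the point where the snap sound effect would be played.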

In terms of code, I haven’t been able to make much progress yet; however, I’ve started watching Coding Train and Patt Vira videos to understand how to code the mouse interactions I have in mind.
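One of those mouse interactions is working out which piece the user clicked on before dragging it. Here is a minimal sketch, assuming square pieces stored in an array and drawn in array order (so later pieces appear on top). `pieceUnderMouse` and the object fields are hypothetical names for illustration. The function walks the array backwards so the topmost piece is picked, and it skips pieces that are already locked into place.

```javascript
// Hypothetical hit test: returns the index of the topmost unplaced piece
// under the point (mx, my), or -1 if there is none.
function pieceUnderMouse(pieces, mx, my) {
  // Iterate from the end because the last piece is drawn on top
  for (let i = pieces.length - 1; i >= 0; i--) {
    const p = pieces[i];
    const inside =
      mx >= p.x && mx < p.x + p.size &&
      my >= p.y && my < p.y + p.size;
    // Pieces already snapped onto the board can no longer be picked up
    if (inside && !p.placed) {
      return i;
    }
  }
  return -1; // nothing grabbable under the cursor
}

// Two overlapping 50 px pieces; the second one is drawn on top
const pieces = [
  { x: 0, y: 0, size: 50, placed: false },
  { x: 30, y: 30, size: 50, placed: false },
];
console.log(pieceUnderMouse(pieces, 40, 40)); // 1
console.log(pieceUnderMouse(pieces, 10, 10)); // 0
console.log(pieceUnderMouse(pieces, 200, 200)); // -1
```

In p5.js, this could be called from `mousePressed()` with `mouseX` and `mouseY`. The selected piece would then follow the cursor in `mouseDragged()` until it is dropped in `mouseReleased()`.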

Most frightening or uncertain part of my midterm project

The most frightening part of my midterm project so far is figuring out how to implement my ideas in code in an efficient, simple way. There are two main interactions I’m intimidated by: (1) splitting the images into smaller squares, especially while maintaining the aspect ratio and quality of the images, and (2) snapping the pieces into place when solving the puzzle. For the former, I believe The Coding Train has made a video on a similar concept, so I hope I can refer to his tutorials to understand the code; the latter, I believe, will become more familiar through trial and error. Another concern, less technical and more about aesthetics, is finding appropriate assets (i.e., images, background music, and sound effects), as that is what most non-technical users will notice rather than the code. To overcome this, I’ve already started searching for ideas and looking at past projects to see where they found their assets. Luckily, we also live in the age of AI, so if it comes down to it, I may use AI-generated images to get exactly what I’m looking for; however, my preference is for the images to be more realistic.
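For the first worry, splitting an image into squares without distorting it, one option is to derive the grid from the image’s own dimensions. This is a minimal sketch, assuming the difficulty setting controls the number of columns (`cols`); `makeTileGrid` and all field names are hypothetical. The tile size comes from the image width and the number of rows from the height. Any leftover strip smaller than one tile is cropped evenly from both edges rather than stretched, so every tile stays square.

```javascript
// Hypothetical helper: computes source rectangles for square puzzle tiles.
// cols is assumed to come from the chosen difficulty (e.g. 3, 4, 5 ...).
function makeTileGrid(imgWidth, imgHeight, cols) {
  const tileSize = Math.floor(imgWidth / cols);
  if (tileSize < 1) {
    throw new Error("Too many columns for this image width");
  }
  const rows = Math.floor(imgHeight / tileSize);

  // Leftover pixels (less than one tile) are split evenly on both sides,
  // so the crop stays centered instead of stretching the image.
  const offsetX = Math.floor((imgWidth - cols * tileSize) / 2);
  const offsetY = Math.floor((imgHeight - rows * tileSize) / 2);

  const tiles = [];
  for (let row = 0; row < rows; row++) {
    for (let col = 0; col < cols; col++) {
      tiles.push({
        row, // correct row on the solved board
        col, // correct column on the solved board
        sx: offsetX + col * tileSize, // source x in the original image
        sy: offsetY + row * tileSize, // source y in the original image
        size: tileSize,
      });
    }
  }
  return { tileSize, rows, cols, tiles };
}

// Example: a 1000 x 700 image split into 5 columns
const grid = makeTileGrid(1000, 700, 5);
console.log(grid.tileSize, grid.rows, grid.tiles.length); // 200 3 15
console.log(grid.tiles[0].sx, grid.tiles[0].sy); // 0 50
```

In p5.js, each tile’s region could then be copied out of the full-resolution image with `img.get(sx, sy, size, size)` and drawn at whatever on-screen size the board needs with `image()`. Slicing from the original image rather than a pre-shrunk copy should help keep the pieces sharp.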