Concept

My project explores the intersection of physical interaction and emergent systems through a digital flocking simulation. Inspired by Craig Reynolds’ “Boids” algorithm, I’m creating an interactive experience in which users manipulate a flock of virtual entities using both hand gestures and physical controls. The goal is an intuitive interface that lets people “conduct” the movement of the flock and see how simple rules produce complex, mesmerizing patterns.

The simulation displays a collection of geometric shapes (triangles, circles, squares, and stars) that move according to three core flocking behaviors: separation, alignment, and cohesion. Users can influence these behaviors through hand gestures detected by a webcam and physical controls connected to an Arduino.

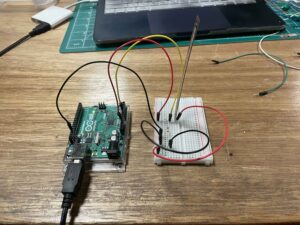

Arduino Integration Design

The Arduino component of my project will create a tangible interface for controlling specific aspects of the flocking simulation:

- Potentiometer Input:
  - Function: Controls the movement speed of all entities in the flock
  - Implementation: Analog reading from the potentiometer (0-1023)
  - Communication: Raw values sent to P5.js via serial communication
  - P5.js Action: Values mapped to a speed multiplier (0.5x to 5x normal speed)

- Button 1 – “Add” Button:
  - Function: Adds new entities to the simulation
  - Implementation: Digital input with debouncing
  - Communication: Sends “ADD” text command when pressed
  - P5.js Action: Creates 5 new boids at random positions

- Button 2 – “Remove” Button:
  - Function: Removes entities from the simulation
  - Implementation: Digital input with debouncing
  - Communication: Sends “REMOVE” text command when pressed
  - P5.js Action: Removes 5 random boids from the simulation

The Arduino code will continuously monitor these inputs and send the appropriate data through serial communication at 9600 baud. I plan to implement debouncing for the buttons to ensure clean signals and reliable operation.
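On the P5.js side, the three message types described above could be handled roughly as follows. This is only a sketch under assumptions: I am assuming each message arrives as one newline-terminated line of text, and the names `potToSpeed`, `handleSerialLine`, and the `state` object are placeholders rather than the final code.

```javascript
// Hypothetical names and message framing; only the ranges and commands come from the design above.
const POT_MAX = 1023;   // 10-bit analog reading from the potentiometer
const MIN_SPEED = 0.5;  // speed multiplier at a reading of 0
const MAX_SPEED = 5;    // speed multiplier at a reading of 1023
const BATCH = 5;        // boids added or removed per button press

// Linearly map a raw potentiometer reading to a speed multiplier.
function potToSpeed(raw) {
  const clamped = Math.min(Math.max(raw, 0), POT_MAX);
  return MIN_SPEED + (clamped / POT_MAX) * (MAX_SPEED - MIN_SPEED);
}

// Apply one line of serial text to the simulation state.
function handleSerialLine(line, state, width, height) {
  const msg = line.trim();
  if (msg === "ADD") {
    // Create new boids at random positions on the canvas.
    for (let i = 0; i < BATCH; i++) {
      state.boids.push({ x: Math.random() * width, y: Math.random() * height });
    }
  } else if (msg === "REMOVE") {
    // Remove randomly chosen boids, stopping early if the flock is empty.
    for (let i = 0; i < BATCH && state.boids.length > 0; i++) {
      const idx = Math.floor(Math.random() * state.boids.length);
      state.boids.splice(idx, 1);
    }
  } else if (/^\d+$/.test(msg)) {
    // A purely numeric line is treated as a potentiometer reading.
    state.speed = potToSpeed(Number(msg));
  }
  // Anything else (empty or partial lines) is ignored.
  return state;
}
```

Keeping the mapping in P5.js rather than on the Arduino means the speed range can be tuned without re-uploading the Arduino sketch.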

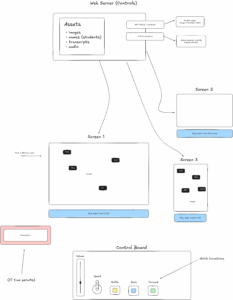

P5.js Implementation Design

The P5.js sketch handles the core simulation and multiple input streams:

- Flocking Algorithm:
  - Three steering behaviors: separation (avoidance), alignment (velocity matching), cohesion (position averaging)
  - Adjustable weights for each behavior to change flock characteristics
  - Four visual representations: triangles (default), circles, squares, and stars

- Hand Gesture Recognition:
  - Uses ML5.js with HandPose model for real-time hand tracking
  - Left hand controls shape selection:
    - Index finger + thumb pinch: Triangle shape
    - Middle finger + thumb pinch: Circle shape
    - Ring finger + thumb pinch: Square shape
    - Pinky finger + thumb pinch: Star shape
  - Right hand controls flocking parameters:
    - Middle finger + thumb pinch: Increases separation force
    - Ring finger + thumb pinch: Increases cohesion force
    - Pinky finger + thumb pinch: Increases alignment force

- Serial Communication with Arduino:
  - Receives and processes three types of data:
    - Analog potentiometer values to control speed
    - “ADD” command to add boids
    - “REMOVE” command to remove boids
  - Provides visual indicator of connection status

- User Interface:
  - Visual feedback showing connection status, boid count, and potentiometer value
  - Dynamic gradient background that subtly responds to potentiometer input
  - Click-to-connect functionality for Arduino communication
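The gesture mapping in the list above can be written as a small lookup, which keeps the left-hand and right-hand roles easy to change later. This is a minimal sketch: `applyGesture`, the `settings` object, the `"Left"`/`"Right"` labels, and the 0.1 step size are all assumptions made for illustration.

```javascript
// Hypothetical names; only the finger-to-action pairs come from the design above.
const SHAPE_BY_FINGER = new Map([
  ["index", "triangle"],
  ["middle", "circle"],
  ["ring", "square"],
  ["pinky", "star"],
]);

const PARAM_BY_FINGER = new Map([
  ["middle", "separation"],
  ["ring", "cohesion"],
  ["pinky", "alignment"],
]);

// Update the settings for one detected pinch.
// handedness: "Left" or "Right"; finger: which finger is touching the thumb.
function applyGesture(handedness, finger, settings, step = 0.1) {
  if (handedness === "Left" && SHAPE_BY_FINGER.has(finger)) {
    settings.shape = SHAPE_BY_FINGER.get(finger);
  } else if (handedness === "Right" && PARAM_BY_FINGER.has(finger)) {
    settings.weights[PARAM_BY_FINGER.get(finger)] += step;
  }
  // Unmapped combinations (for example a right-hand index pinch) do nothing.
  return settings;
}
```

For example, `applyGesture("Left", "ring", settings)` would switch the flock to squares, while `applyGesture("Right", "pinky", settings)` would nudge the alignment weight up by one step.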

Current Progress

So far, I’ve implemented the core flocking algorithm in P5.js and set up the hand tracking system using ML5.js. The boids respond correctly to the three steering behaviors, and I can now switch between different visual representations.
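To make the three steering behaviors concrete, here is a simplified sketch of how they can be combined into one force per boid. It is not my actual implementation: the `steer` function, the `{pos, vel}` boid shape, the neighbor radius of 50, and the `weights` object are assumptions chosen to keep the example short and free of P5.js dependencies.

```javascript
// Illustrative only: names, radius, and data shapes are assumptions.
function steer(boid, flock, weights, radius = 50) {
  const sep = { x: 0, y: 0 };
  const sumVel = { x: 0, y: 0 };
  const sumPos = { x: 0, y: 0 };
  let count = 0;

  for (const other of flock) {
    if (other === boid) continue;
    const dx = boid.pos.x - other.pos.x;
    const dy = boid.pos.y - other.pos.y;
    const d = Math.hypot(dx, dy);
    if (d === 0 || d > radius) continue; // skip coincident or distant boids

    // Separation: push away from each neighbor, more strongly when closer.
    sep.x += dx / (d * d);
    sep.y += dy / (d * d);
    // Accumulate neighbor velocities (alignment) and positions (cohesion).
    sumVel.x += other.vel.x;
    sumVel.y += other.vel.y;
    sumPos.x += other.pos.x;
    sumPos.y += other.pos.y;
    count++;
  }

  if (count === 0) return { x: 0, y: 0 };

  // Alignment: steer toward the average neighbor velocity.
  const ali = { x: sumVel.x / count - boid.vel.x, y: sumVel.y / count - boid.vel.y };
  // Cohesion: steer toward the average neighbor position.
  const coh = { x: sumPos.x / count - boid.pos.x, y: sumPos.y / count - boid.pos.y };

  // Weighted sum of the three behaviors.
  return {
    x: weights.separation * sep.x + weights.alignment * ali.x + weights.cohesion * coh.x,
    y: weights.separation * sep.y + weights.alignment * ali.y + weights.cohesion * coh.y,
  };
}
```

Changing the three weights is what shifts the flock between tight clusters, loose swarms, and streaming lines. A fuller version would typically also cap the resulting force and speed.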

I’ve also established the serial communication framework between P5.js and Arduino using the p5.webserial.js library. The system can detect previously used serial ports and automatically reconnect when the page loads.

For the hand gesture recognition, I’ve successfully implemented basic detection of pinch gestures between the thumb and each of the other fingers. The system can also identify which hand is which (left vs. right) and apply different actions accordingly, although this identification is not yet fully consistent.
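A pinch can be detected by measuring the distance between the thumb tip and each fingertip. The sketch below assumes each detected hand is an object with a `keypoints` array of `{name, x, y}` entries, using names such as `thumb_tip` and `index_finger_tip`. That matches my understanding of recent ML5.js HandPose output, but it should be checked against the version in use. The `detectPinch` name and the 30-pixel threshold are placeholders.

```javascript
// Hypothetical helper; the keypoint names and data shape are assumptions about HandPose output.
const FINGERS = ["index", "middle", "ring", "pinky"];

// Return the finger closest to the thumb tip if it is within the threshold, else null.
function detectPinch(hand, threshold = 30) {
  const byName = new Map(hand.keypoints.map((k) => [k.name, k]));
  const thumb = byName.get("thumb_tip");
  if (!thumb) return null;

  let best = null;
  let bestDist = threshold;
  for (const finger of FINGERS) {
    const tip = byName.get(`${finger}_finger_tip`);
    if (!tip) continue;
    const d = Math.hypot(tip.x - thumb.x, tip.y - thumb.y);
    if (d < bestDist) {
      best = finger;
      bestDist = d;
    }
  }
  return best;
}
```

Picking only the closest fingertip avoids triggering two gestures at once when neighboring fingers are both near the thumb. A fixed pixel threshold depends on how far the hand is from the camera, so scaling it by hand size may turn out to be more reliable.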

Next steps include:

- Finalizing the Arduino circuit with the potentiometer and two buttons

- Implementing proper debouncing for the buttons

- Refining the hand gesture detection to be more reliable

- Adjusting the flocking parameters for a more visually pleasing result

- Adding more visual feedback and possibly sound responses
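For the debouncing step in the list above, the timing logic I have in mind looks roughly like this. It is written in JavaScript only to stay consistent with the P5.js code; on the Arduino the same idea would be ported using `millis()` and `digitalRead()`. The `createDebouncer` name and the 50 ms delay are assumptions, not tested values.

```javascript
// Hypothetical helper: reports a press only after the reading has been stable for delayMs.
function createDebouncer(delayMs = 50) {
  let lastRaw = false;  // most recent raw reading
  let stable = false;   // debounced button state
  let lastChange = 0;   // time at which the raw reading last changed

  // raw: current reading (true = pressed); nowMs: current time in milliseconds.
  // Returns true exactly once per debounced press, false otherwise.
  return function update(raw, nowMs) {
    if (raw !== lastRaw) {
      // The reading flipped, possibly due to contact bounce: restart the timer.
      lastRaw = raw;
      lastChange = nowMs;
    }
    if (nowMs - lastChange >= delayMs && raw !== stable) {
      stable = raw;
      return stable; // true on a confirmed press, false on a confirmed release
    }
    return false;
  };
}
```

Reporting only the confirmed press means a held button sends one “ADD” or “REMOVE” command rather than a stream of them.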

The most challenging aspect so far has been getting the hand detection to work reliably, especially distinguishing between left and right hands consistently. I’m still working on improving this aspect of the project.

I see this project not just as a technical demonstration but as an exploration of how to build intuitive interfaces for interacting with complex systems. By combining physical controls with gesture recognition, I hope to create an engaging experience that gives users a feel for how emergent behaviors arise from simple rules.